The AMD FreeSync Review

by Jarred Walton on March 19, 2015 12:00 PM EST

FreeSync Features

In many ways FreeSync and G-SYNC are comparable. Both refresh the display as soon as a new frame is available, at least within their normal range of refresh rates. There are differences in how this is accomplished, however.
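To make "refresh as soon as a new frame is available" concrete, here is a simplified Python model of our own, not AMD's or NVIDIA's actual timing logic. `vsync_fixed` assumes a fixed 60 Hz display where a finished frame waits for the next refresh tick. `adaptive` assumes a hypothetical panel that can refresh as often as 144 Hz and starts a refresh as soon as a frame is ready. The model ignores GPU back-pressure and the case where frames arrive more slowly than the panel's minimum refresh rate.

```python
import math


def vsync_fixed(frame_ready_ms, refresh_hz=60.0):
    """Fixed refresh + VSYNC: a finished frame waits for the next refresh tick.

    Returns the time (ms) at which each frame appears on screen.
    Each refresh tick can show at most one new frame.
    """
    period = 1000.0 / refresh_hz
    shown = []
    last_tick = -1
    for t in frame_ready_ms:
        # First tick at or after the frame is ready, and after the last tick used.
        tick = max(math.ceil(t / period), last_tick + 1)
        shown.append(round(tick * period, 2))
        last_tick = tick
    return shown


def adaptive(frame_ready_ms, max_hz=144.0):
    """Adaptive refresh (simplified): start a refresh as soon as a frame is
    ready, but no sooner than 1/max_hz after the previous refresh began."""
    min_interval = 1000.0 / max_hz
    shown = []
    last = None
    for t in frame_ready_ms:
        start = t if last is None else max(t, last + min_interval)
        shown.append(round(start, 2))
        last = start
    return shown


# A steady ~45 FPS workload: one frame every 22 ms.
frames = [0.0, 22.0, 44.0, 66.0]
print(vsync_fixed(frames))  # [0.0, 33.33, 50.0, 66.67]
print(adaptive(frames))     # [0.0, 22.0, 44.0, 66.0]
```

On the fixed 60 Hz display the gaps between frames on screen mix 33.3 ms and 16.7 ms, the judder that adaptive refresh is meant to remove; on the adaptive display each frame appears 22 ms after the previous one.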

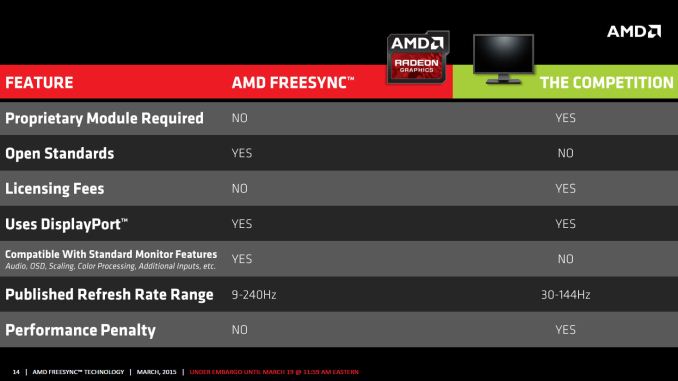

G-SYNC uses a proprietary module that replaces the normal scaler hardware in a display. Besides the added cost, this means that any company looking to make a G-SYNC display has to buy that module from NVIDIA. Of course, the reason NVIDIA went with a proprietary module was that Adaptive Sync didn’t exist when they started working on G-SYNC, so they had to create their own protocol. The G-SYNC module handles all the regular core features of the display, such as the OSD, but it’s not as full-featured as a “normal” scaler.

In contrast, as part of the DisplayPort 1.2a standard, Adaptive Sync (which is what AMD uses to enable FreeSync) will likely become part of many future displays. The major scaler companies (Realtek, Novatek, and MStar) have all announced support for Adaptive Sync, and it appears most of the changes required to support the standard could be accomplished via firmware updates. That means even if a display vendor doesn’t have a vested interest in making a FreeSync branded display, we could see future displays that still work with FreeSync.

Having FreeSync integrated into most scalers has other benefits as well. All the normal OSD controls are available, and the displays can support multiple inputs, though FreeSync of course requires DisplayPort, as Adaptive Sync doesn’t work over DVI, HDMI, or VGA (DSUB). AMD mentions in one of their slides that G-SYNC also lacks support for audio input over DisplayPort, and the slide mentions color processing as well, though this is somewhat misleading. NVIDIA's G-SYNC module supports color LUTs (Look Up Tables), but it doesn't support multiple color options like the "Warm, Cool, Movie, User, etc." modes that many displays have; NVIDIA states that the focus is on properly producing sRGB content, and so far the G-SYNC displays we've looked at have done quite well in this regard. We’ll look at the “Performance Penalty” aspect as well on the next page.
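For readers unfamiliar with the term, a 1D color LUT simply remaps each channel's input value to an output value. The Python sketch below is a generic illustration under our own assumptions (8-bit channels, a simple gain/gamma curve); it is not how NVIDIA's module or any particular scaler implements its tables. It also shows that a hypothetical "Warm" preset could be expressed as just another set of tables, here one that dims the blue channel, so the G-SYNC limitation described above is the lack of switchable presets rather than the lack of LUTs.

```python
def build_lut(gain=1.0, gamma=1.0):
    """Build a hypothetical 8-bit 1D LUT:
    out = 255 * gain * (in / 255) ** gamma, clamped to 0..255."""
    lut = []
    for v in range(256):
        out = round(255 * gain * (v / 255) ** gamma)
        lut.append(min(255, max(0, out)))
    return lut


def apply_luts(pixel, luts):
    """Look up each channel of an (R, G, B) pixel in its own table."""
    return tuple(lut[c] for lut, c in zip(luts, pixel))


identity = build_lut()
# Hypothetical "Warm" preset: leave red and green alone, scale blue to 90%.
warm = (identity, identity, build_lut(gain=0.9))

print(apply_luts((200, 200, 200), (identity, identity, identity)))  # (200, 200, 200)
print(apply_luts((200, 200, 200), warm))                            # (200, 200, 180)
```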

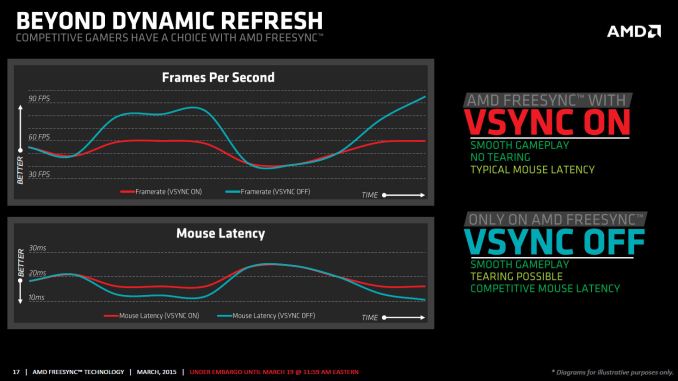

One other feature that differentiates FreeSync from G-SYNC is how things are handled when the frame rate is outside of the dynamic refresh range. With G-SYNC enabled, the system will behave as though VSYNC is enabled when frame rates are either above or below the dynamic range; NVIDIA's goal was to have no tearing, ever. That means if you drop below 30 FPS, you can get the stutter associated with VSYNC, while going above 60Hz/144Hz (depending on the display) is not possible because the frame rate is capped. Admittedly, neither situation is a huge problem, but AMD provides an alternative with FreeSync.
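Expressed as frame times, and assuming a display whose dynamic range runs from 30 Hz to 144 Hz (the figures mentioned above; actual ranges vary by panel), the variable-refresh window is:

$$
t_{\text{frame}} = \frac{1000\ \text{ms}}{f}, \qquad
\frac{1000}{144}\ \text{ms} \approx 6.94\ \text{ms} \;\le\; t_{\text{frame}} \;\le\; \frac{1000}{30}\ \text{ms} \approx 33.3\ \text{ms}
$$

Frames that take longer than about 33.3 ms to render, or arrive more often than every 6.94 ms, fall outside that window, and that is where the out-of-range behavior takes over.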

Instead of always behaving as though VSYNC is on, FreeSync can revert to either VSYNC off or VSYNC on behavior if your frame rates are too high or too low. With VSYNC off, you could still get image tearing, but at higher frame rates input latency would be lower. Again, this isn't necessarily a big flaw with G-SYNC (and I’d assume NVIDIA could rework the drivers to change the behavior if needed), but having a choice is never a bad thing.
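The difference comes down to a single choice about what happens outside the range. The Python sketch below models it under our own assumptions; the `OutOfRange` setting and the returned labels are hypothetical names, not AMD's driver options or NVIDIA's API. G-SYNC, as described here, is fixed to the VSYNC-on branch, while FreeSync lets the user pick either one.

```python
from enum import Enum


class OutOfRange(Enum):
    """Hypothetical setting for behavior outside the dynamic refresh range."""
    VSYNC_ON = "vsync_on"    # wait for a refresh: no tearing, but stutter or a frame cap
    VSYNC_OFF = "vsync_off"  # scan out immediately: lower latency, tearing possible


def present_mode(fps, min_hz, max_hz, out_of_range):
    """Describe how frames are presented at a given frame rate (simplified model)."""
    if min_hz <= fps <= max_hz:
        return "variable refresh"  # inside the range, both technologies behave alike
    if out_of_range is OutOfRange.VSYNC_ON:
        return "capped at max refresh" if fps > max_hz else "vsync stutter"
    return "tearing possible"


# As described above, G-SYNC always behaves as though VSYNC is on.
GSYNC_POLICY = OutOfRange.VSYNC_ON

for fps in (25, 90, 200):
    print(fps, present_mode(fps, 30, 144, GSYNC_POLICY),
          "|", present_mode(fps, 30, 144, OutOfRange.VSYNC_OFF))
```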

There’s another aspect to consider with FreeSync that might be interesting: as an open standard, it could potentially find its way into notebooks sooner than G-SYNC. We have yet to see any shipping G-SYNC enabled laptops, and it’s unlikely most notebook manufacturers would be willing to pay $200 or even $100 extra to get a G-SYNC module into a notebook; there's also the question of power requirements. Then again, earlier this year there was an inadvertent leak of some alpha drivers that allowed G-SYNC to function on the ASUS G751j notebook without a G-SYNC module, so it’s clear NVIDIA is investigating other options.

While NVIDIA may do G-SYNC without a module for notebooks, there are still other questions. With many notebooks using a form of dynamic switchable graphics (Optimus and Enduro), Adaptive Sync support in Intel's processor graphics could certainly help. NVIDIA might work with Intel to make G-SYNC work (though it’s worth pointing out that the ASUS G751 doesn’t support Optimus, so it’s not a problem with that notebook), and AMD might be able to convince Intel to adopt DP Adaptive Sync, but to date neither has happened. There’s no clear direction yet, but there’s definitely a market for adaptive refresh in laptops, as many are unable to reach 60+ FPS at high quality settings.

350 Comments

P39Airacobra - Monday, March 23, 2015

Why will it not work with the R9 270? That is BS! To hell with you AMD! I paid good money for my R9 series card! And it was supposed to be current GCN, not GCN 1.0! Not only do you have to deal with crap drivers that cause artifacts, now AMD is pulling marketing BS!

Morawka - Tuesday, March 24, 2015

Anandtech, have you seen the PCPerspective article on Gsync vs Freesync? PCper was seeing ghosting with freesync. Can you guys corroborate their findings?

shadowjk - Tuesday, March 24, 2015

Am I the only one who would want a 24"-ish 1080p IPS screen with gsync or freesync?

xenol - Tuesday, March 24, 2015

FreeSync and GSync shouldn't have ever happened. The problem I have is that "syncing" is a relic of the past. The only reason you needed to sync with a monitor is because they were using CRTs that could only trace the screen line by line. It just kept things simpler (or maybe more practical) if you weren't trying to fudge with the timing of that on the fly.

Now, you can address each individual pixel. There's no need to "trace" each line. DVI should've eliminated this problem because it was meant for LCDs. But no, in order to retain backwards compatibility, DVI's data stream behaves exactly like VGA's. DisplayPort finally did away with this by packetizing the data, which I hope means that display controllers only change what they need to change rather than "refresh" the screen. But given that DisplayPort is still backwards compatible with DVI, I doubt that's the case.

Get rid of the concept of refresh rates and syncing altogether. Stop making digital displays behave like CRTs.

Mrwright - Wednesday, March 25, 2015

Why do I need either Freesync or Gsync when I already get over 100fps in all games at 2560x1440? All I want is a 144Hz 2560x1440 monitor without the Gsync tax, as gsync and freesync are only useful if you drop below 60fps.

ggg000 - Thursday, March 26, 2015

Freesync is a joke: https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=VJ-Pc0iQgfk&fe...

https://www.youtube.com/watch?v=1jqimZLUk-c&fe...

https://www.youtube.com/watch?feature=player_embed...

https://www.youtube.com/watch?v=84G9MD4ra8M&fe...

https://www.youtube.com/watch?v=aTJ_6MFOEm4&fe...

https://www.youtube.com/watch?v=HZtUttA5Q_w&fe...

ghosting like hell.

willis936 - Tuesday, August 25, 2015

LCD is a memory array. If you don't use it, you lose it. Each pixel needs to be physically refreshed the same number of times a second. You could save on average bitrate by only sending changed pixels, but that requires more work on the GPU and adds latency. What's more, it doesn't change what your maximum bitrate needs to be, and don't even bother suggesting multiple frame buffers, as that adds TV-tier latency.

chizow - Monday, March 30, 2015

And more evidence of FreeSync's (and AnandTech's) shortcomings, again from PCPer. I remember a time when AnandTech was willing to put in the work, with the kind of creativity needed to come to such conclusions, but I guess this is what happens when the boss retires and takes a gig with Apple. http://www.pcper.com/reviews/Graphics-Cards/Dissec...

PCPer is certainly the go-to now for any enthusiast that wants answers beyond the superficial spoon-fed vendor stories.

ZmOnEy132 - Saturday, December 17, 2016

Free sync is not meant to increase fps. The whole point is visuals. It stops visual tearing, which is why it drops frame rates to match the monitor. Fps has no effect on what free sync is meant to do. It's all visuals, not performance. I hate when people who don't know what they're talking about write reviews. You're gonna get dropped frame rates because that means the frame isn't ready yet, so the GPU doesn't give it to the display and holds onto it a tiny bit longer to make sure the monitor and GPU are both ready for that frame.