AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs.

The Return of Supersample AA

Over the years, the methods used to implement anti-aliasing on video cards have bounced back and forth. The earliest generation of cards such as the 3Dfx Voodoo 4/5 and ATI and NVIDIA’s DirectX 7 parts implemented supersampling, which involved rendering a scene at a higher resolution and scaling it down for display. Using supersampling did a great job of removing aliasing while also slightly improving the overall quality of the image due to the fact that it was sampled at a higher resolution.
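To make the idea concrete, here is a minimal sketch of supersampling in Python. It is not how any of these cards implement it: the `render_scene` function is a hypothetical stand-in for a renderer, and the simple block average (an ordered 2x2 pattern with a box filter) is an assumption chosen for brevity.

```python
def render_scene(width, height):
    """Hypothetical stand-in for a renderer: a hard diagonal edge.

    Samples to the right of the diagonal are white (1.0), the rest black (0.0).
    """
    return [[1.0 if x > y else 0.0 for x in range(width)] for y in range(height)]


def downsample(image, factor):
    """Average each factor-by-factor block of samples into one output pixel."""
    out_height = len(image) // factor
    out_width = len(image[0]) // factor
    result = []
    for oy in range(out_height):
        row = []
        for ox in range(out_width):
            total = 0.0
            for sy in range(factor):
                for sx in range(factor):
                    total += image[oy * factor + sy][ox * factor + sx]
            row.append(total / (factor * factor))
        result.append(row)
    return result


def supersample(width, height, factor=2):
    """Render at factor times the resolution on each axis, then scale down."""
    return downsample(render_scene(width * factor, height * factor), factor)


native = render_scene(4, 4)  # contains only pure black and pure white pixels
ssaa = supersample(4, 4)     # pixels along the diagonal become 0.25
```

The native render has a stair-stepped edge made of only 0.0 and 1.0 values, while the supersampled version produces intermediate values along the edge. The cost is visible too: the sketch renders four times as many samples to produce the same number of pixels.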

But supersampling was expensive, particularly on those early cards. So the next generation implemented multisampling, which instead of rendering a scene at a higher resolution, rendered it at the desired resolution and then sampled polygon edges to find and remove aliasing. The overall quality wasn’t quite as good as supersampling, but it was much faster, with that gap increasing as MSAA implementations became more refined.
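A rough sketch of where the speed difference comes from, assuming the usual description of multisampling in which the pixel shader runs once per pixel and its result is shared by every covered sample. The sample positions, the `shade` function, and the vertical-edge coverage test are all hypothetical and only serve to count shader runs.

```python
# Illustrative sample positions inside one pixel (not real hardware values).
SAMPLES = [(0.125, 0.625), (0.375, 0.125), (0.625, 0.875), (0.875, 0.375)]


def shade(counter):
    """Hypothetical pixel shader: returns white and counts how often it runs."""
    counter["runs"] += 1
    return 1.0


def covered_samples(edge_x):
    """Samples left of a vertical polygon edge at edge_x count as covered."""
    return [(x, y) for (x, y) in SAMPLES if x < edge_x]


def ssaa_pixel(edge_x, counter):
    """Supersampling: run the shader once for every covered sample."""
    total = sum(shade(counter) for _ in covered_samples(edge_x))
    return total / len(SAMPLES)


def msaa_pixel(edge_x, counter):
    """Multisampling: run the shader once, reuse the color for covered samples."""
    covered = covered_samples(edge_x)
    if not covered:
        return 0.0
    color = shade(counter)
    return color * len(covered) / len(SAMPLES)


ssaa_runs = {"runs": 0}
msaa_runs = {"runs": 0}
# One pixel fully inside the polygon (edge_x=2.0) and one split by its edge (0.5).
ssaa_colors = [ssaa_pixel(e, ssaa_runs) for e in (2.0, 0.5)]
msaa_colors = [msaa_pixel(e, msaa_runs) for e in (2.0, 0.5)]
```

With a flat-colored polygon both approaches produce the same pixel values, but the supersampled path runs the shader six times here against two for the multisampled path. The results only diverge when the shader or texture output itself varies inside a pixel, which is the case multisampling cannot smooth.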

Lately we have seen a slow bounce back in the other direction, as MSAA’s imperfections became more noticeable and in need of correction. Here supersampling saw a limited reintroduction, with AMD and NVIDIA using it on certain parts of a frame as part of their Adaptive Anti-Aliasing (AAA) and Supersample Transparency Anti-Aliasing (SSTr) schemes respectively. In these schemes SSAA is used to smooth out semi-transparent textures, where the textures themselves were the source of the aliasing and MSAA could not work on them since they were not polygon edges. This still didn’t completely resolve MSAA’s shortcomings compared to SSAA, but it solved the transparent texture problem. With these technologies the differences between MSAA and SSAA were reduced to MSAA being unable to anti-alias shader output, and MSAA not having the advantages of sampling textures at a higher resolution.

With the 5800 series, things have finally come full circle for AMD. Based upon their SSAA implementation for Adaptive Anti-Aliasing, they have re-implemented SSAA as a full screen anti-aliasing mode. Now gamers can once again access the higher quality anti-aliasing offered by a pure SSAA mode, instead of being limited to the best of what MSAA + AAA could do.

Ultimately the inclusion of this feature on the 5870 comes down to two matters: the card has lots and lots of processing power to throw around, and shader aliasing was the last obstacle that MSAA + AAA could not solve. With the reintroduction of SSAA, AMD is not dropping or downplaying their existing MSAA modes; rather it’s offered as another option, particularly one geared towards use on older games.

“Older games” is an important phrase here, as there is a catch to AMD’s SSAA implementation: it only works under OpenGL and DirectX 9. As we found out in our testing and after much head-scratching, it does not work on DX10 or DX11 games. Attempting to utilize it there will result in the game switching to MSAA.

When we asked AMD about this, they cited the fact that DX10 and later give developers much greater control over anti-aliasing patterns, and that using SSAA with these controls may create incompatibility problems. Furthermore the games that can best run with SSAA enabled from a performance standpoint are older titles, making the use of SSAA a more reasonable choice with older games as opposed to newer games. We’re told that AMD will “continue to investigate” implementing a proper version of SSAA for DX10+, but it’s not something we’re expecting any time soon.

Unfortunately, in our testing of AMD’s SSAA mode, there are clearly a few kinks to work out. Our first AA image quality test was going to be the railroad bridge at the beginning of Half Life 2: Episode 2. That scene is full of aliased metal bars, cars, and trees. However as we’re going to lay out in this screenshot, while AMD’s SSAA mode eliminated the aliasing, it also gave the entire image a smooth makeover – too smooth. SSAA isn’t supposed to blur things, it’s only supposed to make things smoother by removing all aliasing in geometry, shaders, and textures alike.

As it turns out this is a freshly discovered bug in their SSAA implementation that affects newer Source-engine games. Presumably we’d see something similar in the rest of The Orange Box, and possibly other HL2 games. This is an unfortunate engine to have a bug in, since Source-engine games tend to be heavily CPU limited anyhow, making them perfect candidates for SSAA. AMD is hoping to have a fix out for this bug soon.

“But wait!” you say. “Doesn’t NVIDIA have SSAA modes too? How would those do?” And indeed you would be right. While NVIDIA dropped official support for SSAA a number of years ago, it has remained as an unofficial feature that can be enabled in Direct3D games, using tools such as nHancer to set the AA mode.

Unfortunately NVIDIA’s SSAA mode isn’t even in the running here, and we’ll show you why.

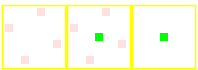

5870 SSAA

GTX 280 MSAA

GTX 280 SSAA

At the top we have the view from DX9 FSAA Viewer of ATI’s 4x SSAA mode. Notice that it’s a rotated grid with 4 geometry samples (red) and 4 texture samples. Below that we have NVIDIA’s 4x MSAA mode, a rotated grid with 4 geometry samples and a single texture sample. Finally we have NVIDIA’s 4x SSAA mode, an ordered grid with 4 geometry samples and 4 texture samples. For reasons that we won’t delve into, rotated grids are a better grid layout from a quality standpoint than ordered grids. This is why early implementations of AA using ordered grids were dropped for rotated grids, and is why no one uses ordered grids these days for MSAA.
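One commonly cited reason for the difference can be sketched in a few lines of Python. The sample positions below are illustrative assumptions, not the positions reported by the FSAA Viewer or used by either vendor's hardware. The sketch sweeps a vertical polygon edge across one pixel and records how many distinct coverage values each layout can produce.

```python
# Assumed 4-sample layouts inside a unit pixel (illustrative positions only).
ORDERED = [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]
ROTATED = [(0.125, 0.625), (0.375, 0.125), (0.625, 0.875), (0.875, 0.375)]


def coverage_levels(samples, steps=64):
    """Sweep a vertical edge across the pixel and collect the coverage values.

    Everything left of the edge counts as covered by the polygon.
    """
    levels = set()
    for i in range(steps + 1):
        edge_x = i / steps
        covered = sum(1 for (x, _y) in samples if x < edge_x)
        levels.add(covered / len(samples))
    return sorted(levels)


ordered_levels = coverage_levels(ORDERED)  # [0.0, 0.5, 1.0]
rotated_levels = coverage_levels(ROTATED)  # [0.0, 0.25, 0.5, 0.75, 1.0]
```

Because the ordered grid stacks its samples in two columns, a near-vertical edge can only produce three shades, while the rotated layout spreads the same four samples over four distinct horizontal offsets and yields five. The same argument applies to near-horizontal edges.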

Furthermore, when actually using NVIDIA's SSAA mode, we ran into some definite quality issues with HL2: Ep2. We're not sure if these are related to the use of an ordered grid or not, but it's a possibility we can't ignore.

If you compare the two shots, with MSAA 4x the scene is almost perfectly anti-aliased, except for some trouble along the bottom/side edge of the railcar. If we switch to SSAA 4x that aliasing is solved, but we have a new problem: all of a sudden a number of fine tree branches have gone missing. While MSAA properly anti-aliased them, SSAA anti-aliased them right out of existence.

For this reason we will not be taking a look at NVIDIA’s SSAA modes. Besides the fact that they’re unofficial in the first place, the use of an ordered grid and the problems in HL2 cement the fact that they’re not suitable for general use.

327 Comments


SiliconDoc - Wednesday, September 30, 2009

I was here before this site was even on the map let alone on your radar, and have NEVER had any other acct name.

I will wait for your APOLOGY.

ol1bit - Friday, September 25, 2009

Goodbye 8800gt SLI... nothing has given me the bang for the buck upgrade that this card does!

I paid $490 for my SLI 8800Gt's in 11/07

$379 Sweetness!

Brazos - Thursday, September 24, 2009

I always get nostalgic for Tech TV when a new gen of video cards come out. Watching Leo, Patrick, et al. discuss the latest greatest was like watching kids on Christmas morning. And of course there was Morgan.

totenkopf - Thursday, September 24, 2009

SiliconDoc, this is pathetic. Why are you so upset? No one cares about arguing the semantics of hard or paper launches. Besides, where the F is Nvidia's Gt300 thingy? You post here more than amd fanboys, yet you hate amd... just hibernate until the gt300 launches and then you can come back and spew hatred again.

Seriously... the fact that you can't even formulate a cogent argument based on anything performance related tells me that you have already ceded the performance crown to amd. Instead, you've latched onto this red herring, the paper launch crap. stop it. just stop it. You're like a crying child. Please just be thankful that amd is now allowing you to obtain more of your nvidia panacea for even less money!

Hooray competition! EVERYONE WINS! ...Except silicon doc. He would rather pay $650 for a 280 than see ati sell one card. Ati is the best thing that ever happened to nvidia (and vice versa) Grow the F up and don't talk about bias unless you have none yourself. Hope you don't electrocute yourself tonight while making love to your nvidia card.

SiliconDoc - Thursday, September 24, 2009

" Hooray competition! EVERYONE WINS! ...Except silicon doc. He would rather pay $650 for a 280 than see ati sell one card."

And thus you have revealed your deep seated hatred of nvidia, in the common parlance seen.

Frankly my friend, I still have archived web pages with $500 HD2900XT cards from not that long back, that would easily be $700 now with the inflation we've seen.

So really, what is your red raving rooster point other than you totally excuse ATI that does exactly the same thing, and make your raging hate nvidia whine, as if "they are standalone guilty".

You're ANOTHER ONE, that repeats the same old red fan clichés, and WON'T OWN UP TO ATI'S EXACT SAME BEHAVIOR ! Will you ? I WANT TO SEE IT IN TEXT !

In other words, your whole complaint is INVALID, because you apply it exclusively, in a BIASED fashion.

Now tell me about the hundreds of dollars overpriced ati cards, won't you ? No, you won't. See that is the problem.

silverblue - Friday, September 25, 2009

If you think companies are going to survive without copying what other companies do, you're sadly mistaken.

Yes, nVidia has made advances, but so has ATI. When nVidia brought out the GF4 Ti series, it supported Pixel Shader 1.3 whereas ATI's R200-powered 8500 came out earlier with the more advanced Pixel Shader 1.4. ATI were the first of the two companies to introduce a 256-bit memory bus on their graphics cards (following Matrox). nVidia developed Quincunx, which I still hold in high regard. nVidia were the first to bring out Shader Model 3. I still don't know of any commercially available nVidia cards with GDDR5.

We could go on comparing the two but it's essential that you realise that both companies have developed technologies that have been adopted by the other. However, we wouldn't be so far down this path without an element of copying.

The 2900XT may be overpriced because it has GDDR4. I'm not interested in it and most people won't be.

"In other words, your whole complaint is INVALID, because you apply it exclusively, in a BIASED fashion. " Funny, I thought we were seeing that ad nauseam from you?

Why did I buy my 4830? Because it was cheaper than the 9800GT and performed at about the same level. Not because I'm a "red rooster".

ATI may have priced the 5870 a little high, but in terms of its pure performance, it doesn't come too far off the 295 - a card we know to have two GPUs and costs more. In the end, perhaps AMD crippled it with the 256-bit interface, but until they implement one you'll be convinced that it's a limitation. Maybe, maybe not. GT300 may just prove AMD wrong.

SiliconDoc - Wednesday, September 30, 2009

You have absolutely zero proof that we wouldn't be further down this path without the "competition".

Without a second company or third or fourth or tenth, the monopoly implements DIVISIONS that compete internally, and without other companies, all the intellectual creativity winds up with the same name on their paycheck.

You cannot prove what you say has merit, even if you show me a stagnant monopoly, and good luck doing that.

As ATI stagnated for YEARS, Nvidia moved AHEAD. Nvidia is still ahead.

In fact, it appears they have always been ahead, much like INTEL.

You can compare all you want but "it seems ati is the only one interested in new technology..." won't be something you'll be blabbing out again soon.

Now you try to pass a lesson, and JARED the censor deletes responses, because you two tools think you have a point this time, but only with your deleting and lying assumptions.

NEXT TIME DON'T WAIL ATI IS THE ONLY ONE THAT SEEMS INTERESTED IN IMPLEMENTING NEW TECHNOLOGY.

DON'T SAY IT THEN BACKTRACK 10,000 % WHILE TRYING TO "TEACH ME A LESSON".

You're the one whose big far red piehole spewed out the lie to begin with.

Finally - Friday, September 25, 2009

The term "Nvidiot" somehow sprung to my mind. How come?

silverblue - Thursday, September 24, 2009

You're spot on about his bias. Every single post consists of trash-talking pretty much every ATI card and bigging up the comparative nVidia offering. I think the only product he's not complained about is the 4770, though oddly enough that suffered horrific shortage issues due to (surprise) TSMC.

Even if there were 58x0 cards everywhere, he'd moan about the temperature or the fact it should have a wider bus or that AMD are finally interested in physics acceleration in a proper sense. I'll concede the last point but in my opinion, what we have here is a very good piece of technology that will (like CPUs) only get better in various aspects due to improving manufacturing processes. It beats every other single GPU card with little effort and, when idle, consumes very little juice. The technology is far beyond what RV770 offers and at least, unlike nVidia, ATI seems more interested in driving standards forward. If not for ATI, who's to say we'd have progressed anywhere near this far?

No company is perfect. No product is perfect. However, to completely slander a company or division just because he buys a competitor's products is misguided to say the least. Just because I own a PC with an AMD CPU, doesn't mean I'm going to berate Intel to high heaven, even if their anti-competitive practices have legitimised such criticism. nVidia makes very good products, and so does ATI. They each have their own strengths and weaknesses, and I'd certainly not be using my 4830 without the continued competition between the two big performance GPU manufacturers; likewise, SiliconDoc's beloved nVidia-powered rig would be a fair bit weaker (without competition, would it even have PhysX? I doubt it).

SiliconDoc - Thursday, September 24, 2009

Well, that was just amazing, and you're wrong about me not complaining about the 4770 paper launch, you missed it.

I didn't moan about the temperature, I moaned about the deceptive lies in the review concerning temperatures, that gave ATI a complete pass, and failed to GIVE THE CREDIT DUE THAT NVIDIA DESERVES because of the FACTS, nothing else.

The article SPUN the facts into a lying cobweb of BS. Just like so many red fans do in the posts, and all over the net, and you've done here. Is it so hard to MAN UP and admit the ATI cards run hotter ? Is it that bad for you, that you cannot do it ? Certainly the article FAILED to do so, and spun away instead.

Next, you have this gem " at least, unlike nVidia, ATI seems more interested in driving standards forward."

ROFLMAO - THIS IS WHAT I'M TALKING ABOUT.

Here, let me help you, another "banned" secret that the red roosters keep to their chest so their minions can spew crap like you just did: ATI STOLE THE NVIDIA BRIDGE TECHNOLOGY, ATI HAD ONLY A DONGLE OUTSIDE THE CASE, WHILE NVIDIA PROGRESSED TO INTERNAL BRIDGE. AFTER ATI SAW HOW STUPID IT WAS, IT COPIED NVIDIA.

See, now there's one I'll bet a thousand bucks you never had a clue about.

I for one, would NEVER CLAIM that either company had the lock on "forwarding technology", and I IN FACT HAVE NEVER DONE SO, EVER !

But you red fans spew it all the time. You spew your fanboyisms, in fact you just did, that are absolutely outrageous and outright red leaning lies, period!

you: " at least, unlike nVidia, ATI seems more interested in driving standards forward...."

I would like to ask you, how do you explain the never before done MIMD core Nvidia has, and will soon release ? How can you possibly say what you just said ?

If you'd like to give credit to ATI going with GDDR4 and GDDR5 first, I would have no problem, but you people DON'T DO THAT. You take it MUCH FURTHER, and claim, as you just did, ATI moves forward and nvidia does not. It's a CONSTANT REFRAIN from you people.

Did you read the article and actually absorb the OpenCL information ? Did you see Nvidia has an implementation, is "ahead" of ati ? Did you even dare notice that ? If not, how the hell not, other than the biased wording the article has, that speaks to your emotionally charged hate Nvidia mindset :

"However, to completely slander a company or division just because he buys a competitor's products is misguided to say the least."

That is NOT TRUE for me, as you stated it, but IT IS TRUE FOR YOU, isn't it ?

---

You in fact SLANDERED Nvidia, by claiming only ATI drives forward tech, or so it seems to you...

I've merely been pointing out the many statements all about like you just made, and their inherent falsehood!

---

Here next, you pull the ol' switcharoo, and do what you say you won't do, by pointing out you won't do it! roflmao: " doesn't mean I'm going to berate Intel to high heaven, even if their anti-competitive practices have legitimised such criticism.."

Well, you just did berate them, and just claimed it was justified, cinching home the trashing quickly after you claimed you wouldn't, but have utterly failed to point out a single instance, unlike myself- I INCLUDE the issues and instances, pointing them out intimately and often in detail, like now.

LOL you: " I'd certainly not be using my 4830 without ...."

Well, that shows where you are coming from, but you're still WRONG. If either company dies, the other can move on, and there's very little chance that the company will remain stagnant, since then they won't sell anything, and will die, too.

The real truth about ATI, which I HAVE pointed out before, is IT FELL OFF THE MAP A FEW YEARS BACK AND ALTHOUGH PRIOR TO THAT TIME WAS COMPETITIVE AND PERHAPS THE VERY BEST, IT CAVED IN...

After it had its "dark period" of failure and despair, where Nvidia had the lone top spot, and even produced the still useful and amazing GTX8800 ultimate (with no competition of any note in sight, you failed to notice, even to this day - and claim the EXACT OPPOSITE- because you, a dead brained red, bought the "rebrand whine" lock stock and barrel), ATI "re-emerged", and in fact, doesn't really deserve praise for falling off the wagon for a year or two.

See, that's the truth. The big fat red fib, you liars can stop lying about is the "stagnant technology without competition" whine.

ATI had all the competition it could ever ask for, and it EPIC FAILED for how many years ? A couple, let's say, or one if you just can't stand the truth, and NVIDIA, not stagnated whatsoever, FLEW AHEAD AND RELEASED THE MASSIVE GTX8800 ULTIMATE.

So really friend, just stop the lying. That's all I ask. Quit repeating the trashy and easily disproved ati clichés.

Ok ?