AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

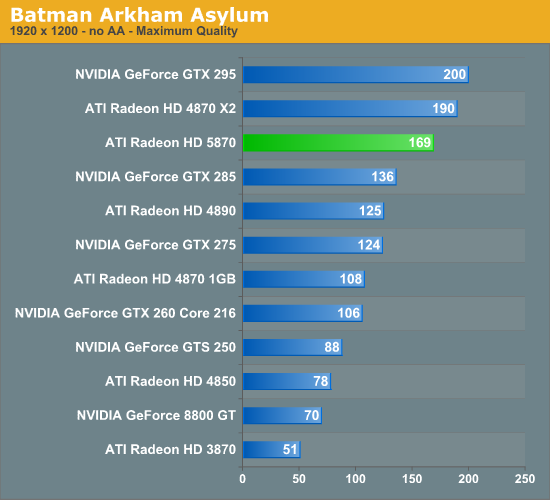

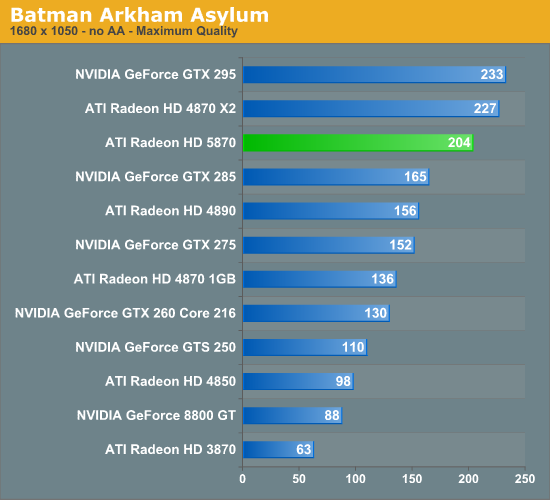

Batman: Arkham Asylum

Batman: Arkham Asylum is another brand-new PC game, and has been burning up the review charts. It’s an Unreal Engine 3 based game, something that’s not immediately obvious from just looking at it, which is rare for UE3 based games.

NVIDIA has put a lot of marketing muscle into the game as part of their The Way It’s Meant to Be Played program, and as a result it ships with PhysX support and 3D Vision support. Unfortunately NVIDIA’s influence has extended to the game’s anti-aliasing too, as its in-game selective AA only works on NVIDIA’s cards. AMD’s cards can perform AA on the game, but only via traditional full screen anti-aliasing, which isn’t nearly as efficient. Because of this, this is the only game where we will not be using AA, as doing so produces meaningless results given the different AA modes used.

Without the use of AA, the performance in this game is best described as “runaway”. The 5870 turns in a score of 102fps, and even the GTS 250 manages 53fps. However, we’re also seeing the 5870’s performance pattern maintained here: it beats the single-GPU cards and loses to the multi-GPU cards.

327 Comments

Zool - Friday, September 25, 2009 - link

"The GT300 is going to blow this 5870 away - the stats themselves show it, and betting otherwise is a bad joke, and if sense is still about, you know it as well."

If not, then it would be a sad day for nvidia after more than 2 years of nothing.

But the 5870 can still blow away any other card around. With DX11 fixed tessellators in the pipeline and compute shader postprocessing (which will finally tax the 1600 stream processors) it will look much better than current dx9. The main advantage of this card is dx11, which of course nvidia doesn't have. And maybe the developers will finally optimize for the ati vector architecture if there isn't any other way meant to be played(payed) :).

Actually nvidia couldn't shrink the last gen to 40nm with its odd high frequency scalar shaders (which means much more leakage), so i wouldn't expect much more from nvidia than to double the stats and do dx11 as well.

SiliconDoc - Friday, September 25, 2009 - link

Here are more new NVidia cards, so the false 2 years complaint is fizzling fast.

Why this hasn't been mentioned here at anandtech yet I'm not sure, but of course...

http://www.fudzilla.com/content/view/15698/1/

Gigabyte jumps the gun with 40nm

According to our info, Nvidia's GT 220, 40nm GT216-300 GPU, will be officially announced in about three weeks time.

The Gigabyte GT 220 works at 720MHz for the core and comes with 1GB of GDDR3 memory clocked at 1600MHz and paired up with a 128-bit memory interface. It has 48 stream processors with a shader clock set at 1568MHz.

--

See, there's a picture as well. So, it's not like nothing has been going on. It's also not "a panicky response to regain attention" - it is the natural progression of movement to newer cores and smaller nm processes, and those things take time, but NOT the two years plus ideas that spread around....

SiliconDoc - Friday, September 25, 2009 - link

The GT200 was released on June 16th and June 17th, 2008, definitely not more than 2 years ago, but less.

The 285 (nm die shrink) on January 15th, 2009, less than 1 year ago.

The 250 on March 3rd this year, though I wouldn't argue you not wanting to count that.

I really don't think I should have to state that you are so far off the mark, it is you, not I, that might heal with correction.

Next, others here, the article itself, and the ati fans themselves won't even claim the 5870 blows away every other card, so that statement you made is wrong, as well.

You could say every other single core card - no problem.

Let's hope the game scenes do look much better, that would be wonderful, and a fine selling point for W7 and DX11 (and this card if it is, or remains, the sole producer of your claim).

I suggest you check around, the DX11 patch for Battleforge is out, so maybe you can find confirmation of your "better looking" games.

" Actualy nvidia couldnt shrink the last gen to 40nm with its odd high frequency scalar shaders "

I believe that is also wrong, as they are doing so for mobile.

What is true is ATI was the guinea pig for 40nm, and you see, their yields are poor, while NVidia, breaking in later, has had good yields on the GT300, as stated by Nvidia directly, after ATI made up and spread lies about 9 or less per wafer. (that link is here, I already posted it)

---

If Nvidia's card doubles performance of the 285, twice the frames at the same reso and settings, I will be surprised, but the talk is, that or more. The stats leaked make that a possibility. The new MIMD may be something else, I read 6x-15x the old performance in certain core areas.

What Nvidia is getting ready to release is actually EXCITING, is easily described as revolutionary for videocards, so yes, a flop would be a very sad day for all of us. I find it highly unlikely, near impossible, the silicon is already being produced.

The only question yet is how much better.

Zool - Friday, September 25, 2009 - link

I want to note also that the 5870 is hard to find which means the 40nm tsmc is still far from the last gen 55nm. After they manage it to get on the 4800 level the prices will be different. And maybe the gt300 is still far away.

mapesdhs - Friday, September 25, 2009 - link

Is there any posting moderation here? Some of the flame/troll posts are getting ridiculous. The same thing is happening on toms these days.

Jarred, best not to reply IMO. I don't think you're ever going to get a logical response. Such posts are not made with the intent of having a rational discussion. Remember, as Bradbury once said, it's a sad fact of nature that half the population have got to be below average. :D

Ian.

Zool - Friday, September 25, 2009 - link

I would like to see some more tests on power load. Actually I don't think there are too many people with 2560*1600 displays. There are still plenty of people with much lower resolutions like 1680*1050 who play with max image quality. With VSync on, if u reduce 200fps to 60fps u get much lower gpu temps and also power load (things like furmark are miles away from real world). On an LCD there is no need to turn it off unless u benchmark and want more fps. I would like to see more power load tests with different resolutions and VSync on (and of course not with crysis).

Also some antialiasing tests, the adaptive antialiasing on 5800 is now much faster i read. And the FSAA is of course blurry, it was always so. If u render the image in 5120*3200 on your 2560*1600 display and then it combines the quad pixels, it will seem like it's a little washed out. Also in those resolutions even the highres textures will begin to pixelate even in closeup, so the combined quad pixels won't resemble the original. Without higher details in game the FSAA in high resolution will just smooth the image even more. Actually it always worked this way even on my gf4800.

SiliconDoc - Friday, September 25, 2009 - link

PS - a good friend of mine has an ATI HD2900 pro with 320 shaders (a strange one not generally listed anywhere) that he bought around the time I was showing him around the egg cards and I bought an ATI card as well.

Well, he's a flight simmer, and it has done quite well for him for about a year, although in his former Dell board it was LOCKED at 500mhz 503mem 2d and 3d, no matter what utility was tried, and the only driver that worked was the CD that came with it.

Next on a 570 sli board he got a bit of relief, and with 35 various installs or so, he could sometimes get 3d clocks to work.

Even with all that, it performed quite admirably for the strange FS9 not being an NVidia card.

Just the other day, on his new P45 triple pci-e slot, after 1-2 months running, another friend suggested he give OCCT a go, and he asked what is good for stability (since he made 1600fsb and an impressive E4500 (conroe) 200x11/2200 stock to a 400x8/ 3200mhz processor overclock for the past week or two). "Ten minutes is ok an hour is great" was the response.

Well, that HD2900pro and OCCT didn't like each other much either - and DOWN went the system, 30 seconds in, cooking that power supply !

--- LOL ---

Now that just goes to show you that hiding this problem with the 4870 and 4890 for so many months, not a peep here... is just not good for end users...

---

Thanks a lot tight lipped "nothing is wrong with ATI cards" red fans. Just wonderful.

---

He had another PS and everything else is OK, and to be quite honest and frank and fair as I near always am, that HD2900pro still does pretty darn good for his flight simming (and a few FPS we all play sometimes) and he has upgraded EVERYTHING else already at least once, so on that card, well done for ATI. (well despite the driver issues, 3d mhz issues, etc)

Oh, and he also has a VERY NICE 'oc on it now(P45 board) - from 600/800 core/mem 3D to 800/1000, and 2d is 500/503, so that's quite impressive.

SiliconDoc - Wednesday, September 30, 2009 - link

Ahh, isn't that typical, the 3 or 4 commenters raving for ati "go dead silent" on this furmark and OCCT issue.

--

"It's ok we never mentioned it for a year plus as we guarded our red fan inner child."

(Ed. note: We "heard" nvidia has a similar implementation")

WHATEVER THAT MEANS !

---

I just saw (somewhere else) another red fanatic bloviating about his 5870 only getting up to 76C in Furmark. ROFLMAO

--

Aww, the poor schmuck didn't know the VRM's were getting cut back and it was getting throttled from the new "don't have a heat explosion" tiny wattage crammed hot running ati core.

SiliconDoc - Friday, September 25, 2009 - link

Well you brought to mind another sore spot for me.

In the article, when the 4870 and 4890 FAIL furmark and OCCT, we are suddenly told, after this many MONTHS, that a blocking implementation in ati driver version 9.2 was put forth by ATI, so their cards wouldn't blow to bits on furmark and OCCT.

I for one find that ABSOLUTELY AMAZING that this important information took this long to actually surface at red central - or rather, I find it all too expected, given the glaring bias, ever present.

Now, the REASON this information finally came forth, other than the epic fail, is BECAUSE the 5870 has a "new technology" and no red fan site can possibly pass up bragging about something new in a red core.

So the brag goes on, how protection bits in the 5870 core THROTTLE BACK the VRM's when heat issues arise, hence allowing for cheap VRM's & or heat dissipation issues to divert disaster.

That's wonderful, we finally find out about ANOTHER 4870 4890 HEAT ISSUE, and driver hack by ATI, and a failure to RUN THE COMMON BENCHMARKS we have all used, this late in the game.

I do have to say, the closed mouthed rabid foam contained red fans are to be applauded for their collective silence over this course of time.

Now, further bias revealed in the article - the EDITOR makes sure to chime in, and notes ~"we have heard Nvidia has a similar thing".

What exactly that similar thing is, and whom the Editor supposedly heard it from, is never made clear.

Nonetheless, it is a hearty excuse and edit for, and in favor of, EXCUSING ATI's FAILURES.

Nonetheless, ALL the nvidia cards passed all the tests, and were 75% in number to the good cooler than the ATI's that were- ATI all at 88C-90C, but, of course, the review said blandly in a whitewash, blaming BOTH competitors nvidia and ati - "temps were all over the place" (on load).

(The winning card GTX295 @ 90C, and 8800GT noted 92C *at MUCH reduced case heat and power consumption, hence INVALID as comparison)

Although certain to mention the GT8800 at 92C, no mention of the 66C GTX250 or GTX260 winners, just the GTX275 @ 75C, since that was less of a MAJOR EMBARRASSMENT for the ATI heat load monsters !

Now THERE'S A REAL MONSTER - AND THAT'S ALL OF THE ATI CARDS TESTED IN THIS REVIEW ! Not just the winning nvidia, while the others that matter (can beat 5870 in sli or otherwise) hung 24C to 14C lower under load.

So, after all that, we have ANOTHER BIAS, GLARING BIAS - the review states " there are no games we know of, nor any we could find, that cause this 5870 heat VRM throttling to occur".

In other words, we are to believe, it was done in the core, just for Furmark and OCCT, or, that it was done as precaution and would NEVER come into play, yes, rest assured, it just won't HAPPEN in the midst of a heavy firefight when your trigger finger is going 1,000mph...

So, I found that just CLASSIC for this place. Here is another massive heat issue, revealed for the first time for 4870 and 4890 months and months and months late, then the 5870 is given THE GOLDEN EXCUSE and massive pass, as that heat reducing VRM cooling voltage cutting framerate lowering safety feature "just won't kick in on any games".

ROFL

I just can't help it, it's just so dang typical.

DigitalFreak - Friday, September 25, 2009 - link

Looks like SnakeOil has yet ANOTHER account.