AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in GPUs

Power, Temperature, & Noise

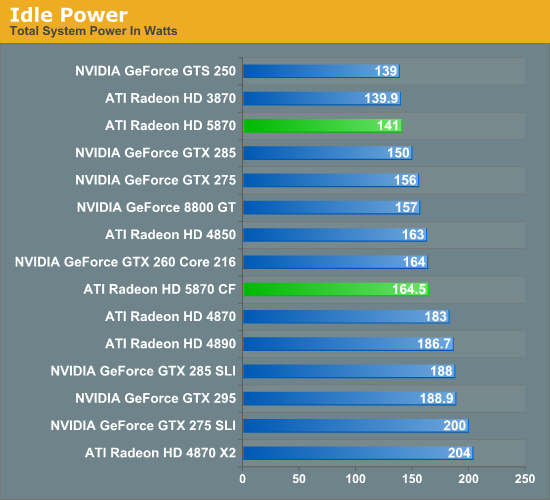

As we have mentioned previously, one of AMD’s big design goals for the 5800 series was to get the idle power load significantly lower than that of the 4800 series. Officially the 4870 does 90W, the 4890 60W, and the 5870 should do 27W.

On our test bench, the idle power load of the system comes in at 141W, a good 42W lower than either the 4870 or 4890. The difference is even more pronounced when compared to the multi-GPU cards that the 5870 competes with performance-wise, with the gap opening up to as much as 63W when compared to the 4870X2. In fact the only cards that the 5870 can’t beat are some of the slowest cards we have: the GTS 250 and the Radeon HD 3870.

As for the 5870 CF, we see AMD’s CF-specific power savings in play here. They told us they can get the second card down to 20W, and on our rig the power consumption of adding a second card is 23.5W, which after taking power inefficiencies into account is right on the dot.
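As a rough sanity check on that last figure, the conversion from wall power to card power can be sketched in a few lines of Python. The 85% power supply efficiency below is our own assumption for illustration, not a measured value for this test bench, and the function name is hypothetical.

```python
# Rough sketch: convert a wall-power delta into the DC power the card draws.
# ASSUMPTION: 85% PSU efficiency, chosen for illustration only.
ASSUMED_PSU_EFFICIENCY = 0.85


def dc_power_from_wall(wall_watts, efficiency=ASSUMED_PSU_EFFICIENCY):
    """Estimate DC-side power from power measured at the wall."""
    return wall_watts * efficiency


wall_delta_w = 23.5  # measured increase from adding the second 5870
second_card_w = dc_power_from_wall(wall_delta_w)
print(f"Estimated draw of the second card: {second_card_w:.1f} W")
```

With that assumed efficiency the estimate lands at roughly 20W, in line with AMD's claim; a more or less efficient power supply would shift the number by a watt or so.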

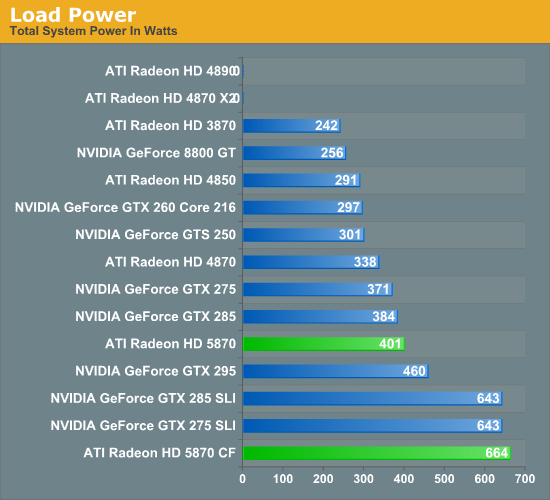

Moving on to load power, we are using the latest version of the OCCT stress testing tool, as we have found that it creates the largest load out of any of the games and programs we have. As we stated in our look at Cypress’ power capabilities, OCCT is being actively throttled by AMD’s drivers on the 4000 and 3000 series hardware. So while this is the largest load we can generate on those cards, it’s not quite the largest load they could ever experience. For the 5000 series, any throttling would be done by the GPU’s own sensors, and only if the VRMs start to overload.

In spite of AMD’s throttling of the 4000 series, right off the bat we have two failures. Our 4870X2 and 4890 both crash the moment OCCT starts. If you ever wanted proof as to why AMD needed to move to hardware based overcurrent protection, you will get no better example of that than here.

For the cards that don’t fail the test, the 5870 ends up being the most power-hungry single-GPU card, at 401W total system power. This puts it slightly ahead of the GTX 285, and well, well behind any of the dual-GPU cards or configurations we are testing. Meanwhile the 5870 CF takes the cake, beating every other configuration for a load power of 664W. If we haven’t mentioned this already we will now: if you want to run multiple 5870s, you’re going to need a good power supply.
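To put those load numbers in power supply terms, here is a minimal sketch of the arithmetic. The 85% efficiency figure is an assumption rather than something we measured, and the variable names are ours.

```python
# Sketch: what the second 5870 costs at load, and the DC load on the PSU.
# ASSUMPTION: 85% PSU efficiency, for illustration only.
ASSUMED_PSU_EFFICIENCY = 0.85

single_5870_wall_w = 401  # total system power, one 5870 under OCCT
crossfire_wall_w = 664    # total system power, 5870 CF under OCCT

# Extra wall power attributable to the second card at load.
second_card_wall_w = crossfire_wall_w - single_5870_wall_w

# Estimated power the PSU actually delivers to the components.
crossfire_dc_w = crossfire_wall_w * ASSUMED_PSU_EFFICIENCY

print(f"Second card adds {second_card_wall_w} W at the wall")
print(f"Estimated DC load for 5870 CF: {crossfire_dc_w:.0f} W")
```

Under that assumption the power supply is delivering somewhere north of 550W to the system under OCCT, before any headroom is added on top.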

Ultimately with the throttling of OCCT it’s difficult to make accurate predictions about all possible cases. But from our tests with it, it looks like it’s fair to say that the 5870 has the capability to be a slightly bigger power hog than any previous single-GPU card.

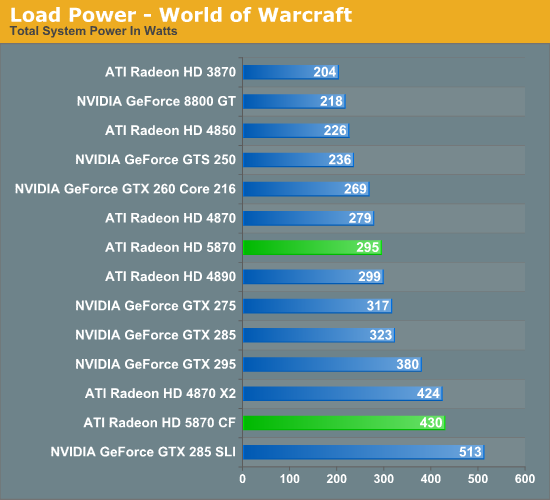

In light of our results with OCCT, we have also taken load power results for our suite of cards when running World of Warcraft. As it’s not a stress-tester it should produce results more in line with what power consumption will look like with a regular game.

Right off the bat, system power consumption is significantly lower. The biggest power hogs are the GTX 285 and GTX 285 SLI for single and dual-GPU configurations respectively. The bulk of the lineup is the same in terms of what cards consume more power, but the 5870 has moved down the ladder, coming in behind the GTX 275 and ahead of the 4870.

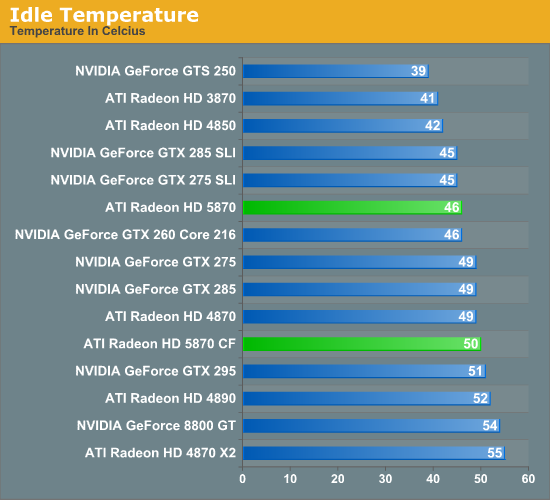

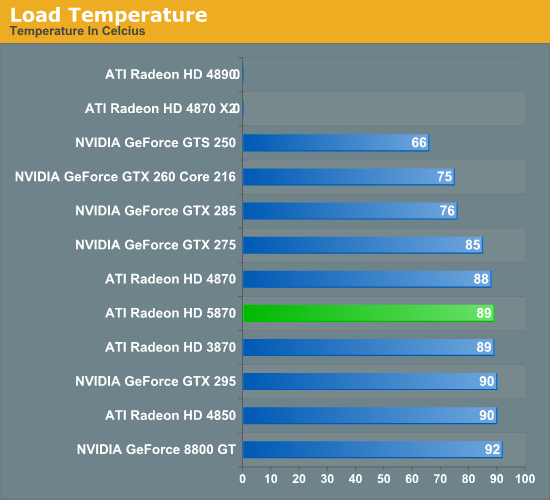

Next up we have card temperatures, measured using the on-board sensors of the card. With a good cooler, lower idle power consumption should lead to lower idle temperatures.

The floor for a good cooler looks to be about 40C, with the GTS 250, 3870, and 4850 all turning in temperatures around here. For the 5870, it comes in at 46C, which is enough to beat the 4870 and the NVIDIA GTX lineup.

Unlike power consumption, load temperatures are all over the place. All of the AMD cards approach 90C, while NVIDIA’s cards are between 92C for an old 8800GT, and a relatively chilly 75C for the GTX 260. As far as the 5870 is concerned, this is solid proof that the half-slot exhaust vent isn’t going to cause any issues with cooling.

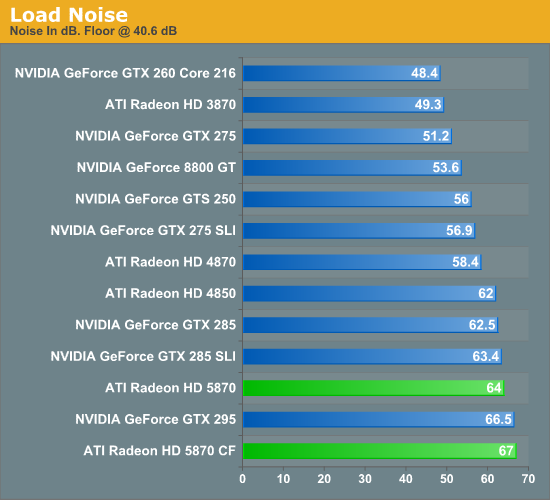

Finally we have fan noise, as measured 6” from the card. The noise floor for our setup is 40.4 dB.

All of the cards, save the GTX 295, generate practically the same amount of noise when idling. Given the lower energy consumption of the 5870 when idling, we had been expecting it to end up a bit quieter, but this was not to be.

At load, the picture changes entirely. The more powerful the card the louder it tends to get, and the 5870 is no exception. At 64 dB it’s louder than everything other than the GTX 295 and a pair of 5870s. Hopefully this is something that the card manufacturers can improve on later on with custom coolers, as while 64 dB at 6" is not egregious it’s still an unwelcome increase in fan noise.
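For a sense of scale, decibels are logarithmic, so the gap between the 5870 at load and our noise floor is larger than the raw numbers suggest. The sketch below uses the standard conversion for sound pressure level (a 20 dB step is a tenfold change in sound pressure); it treats our readings as plain dB SPL values, which is an assumption about the meter, and the function name is hypothetical.

```python
# Sketch: convert a decibel difference into a sound pressure ratio.
# ASSUMPTION: the readings are sound pressure levels (dB SPL).


def pressure_ratio(db_a, db_b):
    """Ratio of sound pressures for two levels given in dB."""
    return 10 ** ((db_a - db_b) / 20)


noise_floor_db = 40.4  # measured noise floor of the test setup
load_5870_db = 64.0    # 5870 at load, measured 6 inches from the card

ratio = pressure_ratio(load_5870_db, noise_floor_db)
print(f"Sound pressure vs. noise floor: {ratio:.1f}x")
```

This says nothing about perceived loudness, which does not scale linearly with sound pressure; it is only meant to show how much the fan adds over the background.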

327 Comments


mapesdhs - Saturday, September 26, 2009 - link

> That is quite all right, you fellas make sure to read it all, ...

But that's the thing S.D., I pretty much don't read any of it. :D (does anyone?) First sentence only, then move on.

Ian.

SiliconDoc - Monday, September 28, 2009 - link

Oh, ha ha, another lowlife smart aleck.

One has to wonder if you do as you say, and only read the first sentence, and move on, why you would care what I've typed, since you cannot imagine anyone does anything different. Heck you shouldn't even notice this, right liar ?

Yes, another liar, not amazing, not at all.

No need to modify or delete the sentence prior to this JaredWalton, smarty pants insulter won't read it, but I'm sure you can't resist, for "convenience's" sake of course.

Oh, I don't have to bring anything up on topic at all, because neither did lowlife skum not reading, he just got his nose awfully browner.

JarredWalton - Friday, September 25, 2009 - link

Very happy to have everyone here convinced you don't know what you're talking about? That's the only "truth" you've brought to this party. Marketing generally wants reputable people to promote a product - the "every man" approach. Funny that we don't see crazy people espousing products on TV (well, excepting stuff like Sham Wow!)

Being crazy like you are in this thread only cements your status as someone who doesn't have a firm grip on reality - someone that can't be trusted. Thanks again for clearing that up so thoroughly.

I am very happy about it as well! :-D

erple2 - Tuesday, September 29, 2009 - link

Yeah, but that "Sham Wow" product works like a freakin' charm... http://www.popularmechanics.com/blogs/home_journal...

SiliconDoc - Wednesday, September 30, 2009 - link

I'm sure you spend your time drooling in front of a TV after you spank your joystick for fps, so know all about wacky commercials you have memorized, and besides, it's a pathetic, all you have left insult, off topic, who cares, pure hatred, no real response, and the 5870 double epic fail IS THE HOTTEST ATI CARD OF ALL TIME!

erple2 - Wednesday, September 30, 2009 - link

What's with the personal attacks? Does that mean that you concede defeat?

Meh, you've no more credibility. Chill out.

SiliconDoc - Friday, September 25, 2009 - link

Yeah, now down to insults, since you lost everything else.

Let's have your claimed specialty outlined here in context, let's have you come clean on LAPTOP GRAPHICS, and spread the truth about how NVIDIA is so far ahead and has been for quite some time, that it's a JOKE to buy a gaming laptop with ATI graphics on board.

Come on mister!

--

Now that is REALLY FUNNY ! You grabbed your arrogant unscrupulous self and proclaimed your fairness, but picked a spot where ati is completely EPIC FAIL, and NVIDIA is 1000% the only way to go, PERIOD, and left that MAJOR slap in the face high and dry.

--

Great job, yeah, you're the "sane one".

LOL

dieselcat18 - Saturday, October 3, 2009 - link

Nvidia fan-boy, troll, loser....take your GeForce cards and go home...we can now all see how terrible ATi is thanks to you ...so I really don't understand why people are beating down their doors for the 5800 series, just like people did for the 4800 and 3800 cards. I guess Nvidia fan-boy trolls like you have only one thing left to do and that's complain and cry like the itty-bitty babies that some of you are about the competition that's beating you like a drum.....so you just wait for your 300 series cards to be released (can't wait to see how many of those are available) so you can pay the overpriced premiums that Nvidia will be charging AGAIN !...hahaha...just like all that re-badging BS they pulled with the 9800 and 200 cards...what a joke !.. Oh my, I must say you have me in a mood and the ironic thing is I do like Nvidia as much as ATi, I currently own and use both. I just can't stand fools like you who spout nothing but mindless crap while waving your team flag (my card is better than yours..WhaaWhaaWhaa)...just take yourself along with your worthless opinions and slide back under that slimy rock you came from.

JarredWalton - Friday, September 25, 2009 - link

You've been insulting in this whole thread, so don't go crying to mamma about someone pointing that out. I did go and delete the posts from the person calling you gay and suggesting you should die in various ways, because as bad as you've been you haven't stooped quite that low (yet).

Laptop issues with ATI... you mean like this (http://www.anandtech.com/mobile/showdoc.aspx?i=356...). Granted, I gave them a chance to address the issues. They failed and my full article on the various Clevo high-end notebooks will make it quite clear how far ahead NVIDIA is in the mobile sector right now.

"Fair" is treating both sides objectively. ATI has major problems with getting updated graphics drivers out on mobile products, and that's horrible. On the desktop, they don't have such issues for the most part. Yeah, you might have to wait a month or so for a driver update to fix the latest hot release and add CrossFire support... but you have to do the exact same thing for NVIDIA with about the same frequency. Only SLI and CF setups really need the regular driver updates, and in many cases the latest 18x and 19x NVIDIA drivers are slower than 16x and 17x on games that are older than six months.

Fair is also looking at these results and saying, "gee, I can get a 5870 for $400 (or $360 if you wait a few weeks for supply to bolster up), and that same card has no CrossFire or SLI wonkiness and costs less than the GTX 295 and 4870X2. Okay, 4870X2 and GTX 295 beat it in raw performance in some cases, but I don't think there's a single game where you can say one HD 5870 offers less than acceptable performance at 2560x1600, and I can guarantee there are titles that still have issues with SLI and CrossFire. (Yeah, you need to turn down some details in Crysis to get acceptable performance, but that's true of anything other than the top SLI and CF configs.) I would be more than happy to give up a bit of performance to avoid dealing with the whole multi-GPU ordeal. Why don't you tell us how innovative and awesome tri-SLI and quad-SLI are while you're at it?

At present, you have contributed more than 20% of the comments to this article, and not a single one has been anything but trolling. Screaming and yelling, insulting others, lying and making stuff up, all in support of a company that is just like any other big company. We don't ban accounts often, but you've more than warranted such action.

SiliconDoc - Friday, September 25, 2009 - link

I think it is more than absolutely clear, that in fact, I said my piece, my first post, and was absolutely attacked. I didn't attack, I got attacked, and in fact you have done plenty of attacking as well.

I have also provided links, to back up my assertions and counter arguments, added the text for easy viewing, and pointed out in very specific detail what issues with bias I had and why.

--

Now you've claimed "all I've done is post FUD".

It is nothing short of amazing for you to even posit that, however I can certainly understand anyone pointing out the obvious bias problems (in the article no less) is "on thin ice", and after getting attacked, is solely blamed for "no facts".

---

I certainly won't disagree that the 5870 appears to be a good value, especially if you don't like to deal with 2 cards or 2 cores.

But my posts never claimed otherwise. I first claimed it was not as good as wanted, was disappointing, and therefore was not the end of what ati had in store.

Since I have posted on the 5890, which will in fact be 512 bit.

Now, you don't like losing your points, or someone adept enough, smart enough, and accurate enough to counter them.

Sorry about that, and sorry that I won't just lay down, as more heaps are shovelled my way.

You skip my actual points, and go some other tangent.

1. PhysX is an advantage and best implementation so far.

your response: "It sucks because only 2 games are available"

---

Is that correct for you to do ? Is it not the very best so far ? Yes, it is in fact.

I have remained factual and reasonable, and glad enough to throw back when I'm attacked.

But the fact remains, I have made absolutely solid 100% points no matter how many times you claim " lying and making stuff up, "

---

Yet of course, what I just said about NVIDIA and laptop chips, you agreed with. So according to your own characterization (quite unfair), all you do is scream and lie, too.

Just wonderful.

The GT300 is going to blow this 5870 away - the stats themselves show it, and betting otherwise is a bad joke, and if sense is still about, you know it as well.