AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

Lower Idle Power & Better Overcurrent Protection

One aspect AMD was specifically looking to improve in Cypress over RV770 was idle power usage. The load power usage for RV770 was fine at 160W for the HD4870, but that power usage wasn’t dropping by a great deal when idle – it fell by less than half to 90W. Later BIOS revisions managed to knock a few more watts off of this, but it wasn’t a significant change, and even later designs like RV790 still had limits to their idling abilities by only being able to go down to 60W at idle.

As a consequence, AMD went about designing Cypress with a much, much lower target in mind. Their goal was to get idle power down to 30W, one third that of RV770. What they got was even better: they beat that target by 10%, hitting a final idle power of 27W. As a result Cypress can idle at 30% of the idle power of RV770, or, compared to Cypress’s load power of 188W, at some 14% of its load power.
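The percentages above follow directly from the quoted wattages. As a quick check, here is a minimal sketch of the arithmetic; the constant and function names are our own, chosen only for illustration.

```python
# Idle and load power figures quoted in the text, in watts.
RV770_IDLE_W = 90.0
CYPRESS_IDLE_TARGET_W = 30.0
CYPRESS_IDLE_W = 27.0
CYPRESS_LOAD_W = 188.0


def percent_of(part: float, whole: float) -> float:
    """Return `part` expressed as a percentage of `whole`."""
    return 100.0 * part / whole


# How far below the 30W design target the final idle figure landed.
under_target = 100.0 - percent_of(CYPRESS_IDLE_W, CYPRESS_IDLE_TARGET_W)
# Cypress idle power relative to RV770 idle power.
vs_rv770_idle = percent_of(CYPRESS_IDLE_W, RV770_IDLE_W)
# Cypress idle power relative to its own load power.
vs_own_load = percent_of(CYPRESS_IDLE_W, CYPRESS_LOAD_W)

print(f"Under target by {under_target:.0f}%")
print(f"{vs_rv770_idle:.0f}% of RV770 idle power")
print(f"{vs_own_load:.0f}% of Cypress load power")
```

The three results round to 10%, 30%, and 14% respectively, matching the figures in the text.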

Accomplishing this kind of dramatic reduction in idle power usage required several changes. Key among them has been the installation of additional power regulating circuitry on the board, and additional die space on Cypress assigned to power regulation. Notably, all of these changes were accomplished without the use of power-gating to shut down unused portions of the chip, something that’s common on CPUs. Instead all of these changes have been achieved through more exhaustive clock-gating (that is, cutting the clock signal to idle blocks so they stop switching) combined with lower idle clock speeds, something GPUs have been doing for some time now.

The reduction is quickly evident in the idle/2D clock speeds of the 5870, which are 150MHz for the core and 300MHz for the memory. The idle clock speeds here are significantly lower than the 4870 (550/900), which in the case of the core is the source of its power savings as compared to the 4870. As tweakers who have attempted to manually reduce the idle clocks on RV770-based cards for further power savings have noticed, RV770 actually loses stability in most situations if its core clock drops too low. With Cypress this has been rectified, enabling it to hit these lower core speeds.

Even bigger, however, are the enhancements to Cypress’s memory controller, which allow it to utilize a number of power-saving tricks with GDDR5 RAM, along with other features that we’ll get to in a bit. RV770’s memory controller was not capable of taking advantage of many of GDDR5’s advanced features beyond its higher bandwidth. Lacking this full bag of tricks, RV770 and its derivatives were unable to reduce the memory clock speed, which is why the 4870 and other products had such high memory clock speeds even at idle. In turn this limited the reduction in power consumption attained by idling GDDR5 modules.

With Cypress, AMD has implemented nearly the entire suite of GDDR5’s power saving features, allowing them to reduce the power usage of the memory controller and the GDDR5 modules themselves. As with the improvements to the core clock, key among the improvements in memory power usage is the ability to go to much lower memory clock speeds, using fast GDDR5 link re-training to quickly switch the memory clock speed and voltage without inducing glitches. AMD is also now using GDDR5’s low power strobe mode, which in turn allows the memory controller to save power by turning off the clock data recovery mechanism. When discussing the matter with AMD, they compared these changes to putting the memory modules and memory controller into a GDDR3-like mode, which is a fair description of how GDDR5 behaves when its high-speed features are not enabled.

Finally, AMD was able to find yet more power savings for Crossfire configurations, and as a result the slave card(s) in a Crossfire configuration can use even less power. The value given to us for an idling slave card is 20W, which is a product of the fact that the slave cards go completely unused when the system is idling. In this state slave cards are still capable of instantaneously ramping up for full-load use, although conceivably AMD could go lower still by powering down the slave cards entirely, at the cost of this ability.

On the opposite side of the ability to achieve such low idle power usage is the need to manage load power usage, which was also overhauled for the Cypress. As a reminder, TDP is not an absolute maximum, rather it’s a maximum based on what’s believed to be the highest reasonable load the card will ever experience. As a result it’s possible in extreme circumstances for the card to need power beyond what its TDP is rated for, which is a problem.

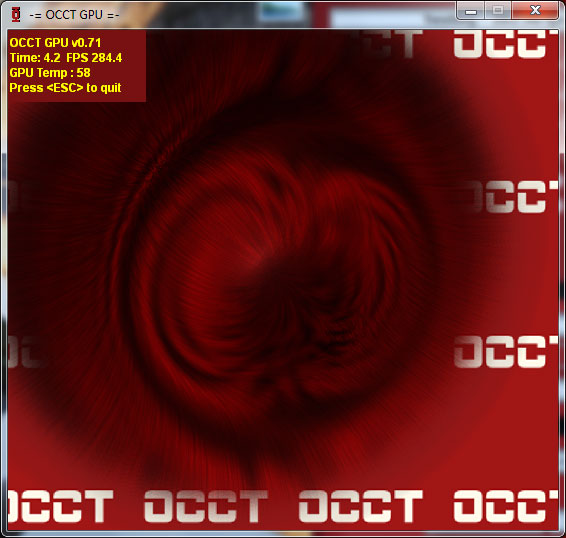

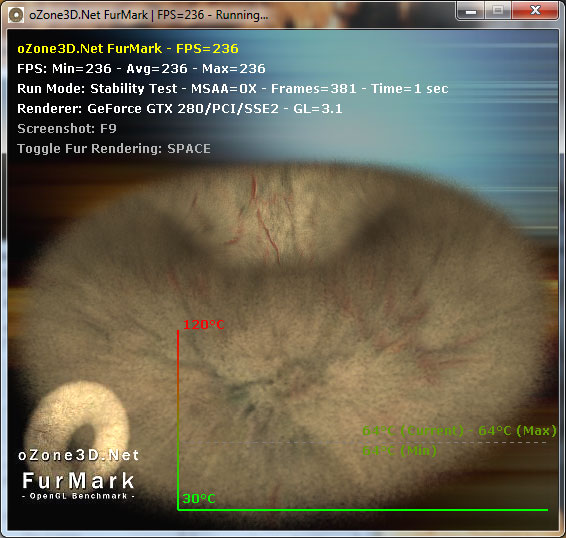

That problem reared its head a lot for the RV770 in particular, with the rise in popularity of stress testing programs like FurMark and OCCT. Although stress testers on the CPU side are nothing new, FurMark and OCCT heralded a new generation of GPU stress testers that were extremely effective in generating a maximum load. Unfortunately for RV770, the maximum possible load and the TDP are pretty far apart, which becomes a problem since the VRMs used in a card only need to be spec’d to meet the TDP of a card plus some safety room. They don’t need to be able to meet whatever the true maximum load of a card can be, as it should never happen.

Why is this? AMD believes that the instruction streams generated by OCCT and FurMark are entirely unrealistic. They try to hit everything at once, and this is something that they don’t believe a game or even a GPGPU application would ever do. For this reason these programs are held in low regard by AMD, and in our discussions with them they referred to them as “power viruses”, a term that’s normally associated with malware. We don’t agree with the terminology, but in our testing we can’t disagree with AMD about the realism of their load – we can’t find anything that generates the same kind of loads as OCCT and FurMark.

Regardless of what AMD wants to call these stress testers, there was a real problem when they were run on RV770. The overcurrent situation they created was too much for the VRMs on many cards, and as a failsafe these cards would shut down to protect the VRMs. At a user level shutting down like this isn’t a very helpful failsafe mode. At a hardware level shutting down like this isn’t enough to protect the VRMs in all situations. Ultimately these programs were capable of permanently damaging RV770 cards, and AMD needed to do something about it. For RV770 they could use the drivers to throttle these programs; until Catalyst 9.8 they detected the program by name, and since 9.8 they detect the ratio of texture to ALU instructions (Ed: We’re told NVIDIA throttles similarly, but we don’t have a good control for testing this statement). This keeps RV770 safe, but it wasn’t good enough. It’s a hardware problem, so the solution needs to be in hardware, particularly if anyone really did write a power virus in the future that the drivers couldn’t stop, in an attempt to break cards on a wide scale.

This brings us to Cypress. For Cypress, AMD has implemented a hardware solution to the VRM problem, by dedicating a very small portion of Cypress’s die to a monitoring chip. In this case the job of the monitor is to continually monitor the VRMs for dangerous conditions. Should the VRMs end up in a critical state, the monitor will immediately throttle back the card by one PowerPlay level. The card will continue operating at this level until the VRMs are back to safe levels, at which point the monitor will allow the card to go back to the requested performance level. In the case of a stressful program, this can continue to go back and forth as the VRMs permit.
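The throttle-and-recover behavior described above can be illustrated with a toy model. This is only a sketch under our own assumptions: the integer numbering of PowerPlay levels (0 as the lowest), the idea of discrete monitoring intervals, and the boolean "critical" flag are all hypothetical simplifications, not details AMD has given us about the actual hardware.

```python
from typing import List, Sequence


def active_level(requested_level: int, vrm_critical: bool) -> int:
    """Return the PowerPlay level the card runs at for one interval.

    Levels are modelled as integers with 0 as the lowest (a hypothetical
    numbering). While the VRMs are critical the card runs one level below
    the requested one; once they are safe it returns to the requested level.
    """
    if vrm_critical:
        # Throttle back by one PowerPlay level, never below the lowest.
        return max(0, requested_level - 1)
    return requested_level


def simulate(vrm_readings: Sequence[bool], requested_level: int = 2) -> List[int]:
    """Apply the monitor to a series of VRM readings (True = critical)."""
    return [active_level(requested_level, reading) for reading in vrm_readings]


# A stressful program that repeatedly pushes the VRMs into a critical state.
readings = [False, True, True, False, True, False]
print(simulate(readings))  # [2, 1, 1, 2, 1, 2]
```

The output shows the back-and-forth pattern: the card sits one level down while the VRMs are critical and returns to the requested level as soon as they recover.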

By implementing this at the hardware level, Cypress cards are fully protected against all possible overcurrent situations, so that it’s not possible for any program (OCCT, FurMark, or otherwise) to damage the hardware by generating too high a load. This also means that the protections at the driver level are not needed, and we’ve confirmed with AMD that the 5870 is allowed to run to the point where it maxes out or where overcurrent protection kicks in.

On that note, because card manufacturers can use different VRMs, it’s very likely that we’re going to see some separation in performance on FurMark and OCCT based on the quality of the VRMs. The cheapest cards with the cheapest VRMs will need to throttle the most, while luxury cards with better VRMs would need to throttle little, if at all. This should make little difference in stock performance on real games and applications (since as we covered earlier, we can’t find anything that pushes a card to excess), but it will likely make itself apparent in overclocking. Overclocked cards, particularly those with voltage modifications, may hit throttle situations in normal applications, which means the VRMs will make a difference here. It also means that overclockers need to keep an eye on clock speeds, as the card shutting down is no longer a tell-tale sign that you’re pushing it too hard.

Finally, while we’re discussing the monitoring chip, we may as well talk about the rest of its features. Along with its monitoring duties, it also acts as a PWM controller. This means that the PWM controller is no longer a separate part that card builders add themselves, and as such we won’t be seeing any cards using a 2-pin fixed speed fan to save money on the PWM controller. All Cypress cards (and presumably, all derivatives) will have the built-in ability to use a 4-pin fan.

327 Comments

SiliconDoc - Monday, September 28, 2009

When the GTX295 still beats the latest ati card, your wish probably won't come true. Not only that, ati's own 4870x2 just recently here promoted as the best value, is a slap in its face.

It's rather difficult to believe all those crossfire promoting red ravers suddenly getting a different religion...

Then we have the no DX11 released yet, and the big, big problem...

NO 5870'S IN THE CHANNELS, reports are it runs hot and the drivers are beta problematic.

---

So, celebrating a red revolution of market share - is only your smart aleck fantasy for now.

LOL - Awwww...

silverblue - Monday, September 28, 2009

It's nearly as fast as a dual GPU solution. I'd say that was impressive.

DirectX 11 comes out in less than a month... hardly a wait. It's not as if the card won't do DX9/10.

Hot card? Designed to be that way. If it was a real issue they'd have made the exhaust larger.

Beta problematic drivers? Most ATI launches seem to go that way. They'll be fixed soon enough.

SiliconDoc - Monday, September 28, 2009

Gee, I thought the red rooster said nvidia sales will be low for a while, and I pointed out why they won't be, and you, well you just couldn't handle that.

I'd say a 60.96% increase in a next gen gpu is "impressive", and that's what Nvidia did just this last time with GT200.

http://www.anandtech.com/video/showdoc.aspx?i=3334...

--

BTW - the 4870 to 4890 move had an additional 3M core transistors, and we were told by you and yours that was not a "rebrand".

BUT - the G80 move to G92 added 73M core transistors, and you couldn't stop shrieking "rebrand".

---

nearly as fast= second best

DX11 in a month = not now and too early

hot card = IT'S OK JUST CLAIM ATI PLANNED ON IT BEING HOT ! ROFL, IT'S OK TO LIE ABOUT IT IN REVIEWS, TOO ! COCKA DOODLE DOOO!

beta drivers = ALL ATI LAUNCHES GO THAT WAY, NOT "MOST"

----

Now, you can tell smart aleck this is a paper launch like the 4870, the 4770, and now this 5870 and no 5850, because....

"YOU'LL PUT YOUR HEAD IN THE SAND AND SCREAM IN CAPS BECAUSE THAT'S HOW YOU ROLL IN RED ROOSERVILLE ! "

(thanks for the composition Jared, it looks just as good here as when you add it to my posts, for "convenience" of course)

ClownPuncher - Monday, September 28, 2009

It would be awesome if you were to stop posting altogether.

SiliconDoc - Monday, September 28, 2009

It would be awesome if this 5870 was 60.96% better than the last ati card, but it isn't.

JarredWalton - Monday, September 28, 2009

But the 5870 *is* up to 65% faster than the 4890 in the tested games. If you were to compare the GTX 280 to the 9800 GX2, it also wasn't 60% faster. In fact, 9800 GX2 beat the GTX 280 in four out of seven tested games, tied it in one, and only trailed in two games: Enemy Territory (by 13%) and Oblivion (by 3%), making ETQW the only substantial win for the GT200.

So we're biased while you're the beacon of impartiality, I suppose, since you didn't intentionally make a comparison similar to comparing apples with cantaloupes. Comparing ATI's new card to their last dual-GPU solution is the way to go, but NVIDIA gets special treatment and we only compare it with their single GPU solution.

If you want the full numbers:

1) On average, the 5870 is 30% faster than the 4890 at 1680x1050, 35% faster at 1920x1200, and 45% faster at 2560x1600.

2) Note that the margin goes *up* as resolution increases, indicating the major bottleneck is not memory bandwidth at anything but 2560x1600 on the 5870.

3) Based on the old article you linked, GTX 280 was on average 5% slower than 9800 GX2 and 59% faster than the 9800 GTX - the 9800 GX2 was 6.4% faster than the GTX 280 in the tested titles.

4) Making the same comparisons, 5870 is only 3.4% faster than the 4870X2 in the tested games and 45% faster than the HD 4890.

Now, the games used for testing are completely different, so we have to throw that out. DoW2 is a huge bonus in favor of the 5870 and it scales relatively poorly with CF, hurting the X2. But you're still trying to paint a picture of the 5870 as a terrible disappointment when in fact we could say it essentially equals what NVIDIA did with the GTX 280.

On average, at 2560x1600, if NVIDIA's GT300 were to come out and be 60% faster than the GTX 285, it will beat ATI's 5870 by about 15%. If it's the same price, it's the clear choice... if you're willing to wait a month or two. That's several "ifs" for what amounts to splitting hairs. There is no current game that won't run well on the HD 5870 at 2560x1600, and I suspect that will hold true of the GT300 as well.

(FWIW, Crysis: Warhead is as bad as it gets, and dropping 4xAA will boost performance by at least 25%. It's an outlier, just like Crysis, since the higher settings are too much for anything but the fastest hardware. "High" settings are more than sufficient.)

SiliconDoc - Tuesday, September 29, 2009

In other words, even with your best fudging and whining about games and all the rest, you can't even bring it with all the lies from the 15-30 percent people are claiming up to 60.96%--

Yes, as I thought.

zshift - Thursday, September 24, 2009

My thoughts exactly ;)

I knew the 5870 was gonna be great based on the design philosophy that AMD/ATi had with the 4870, but I never thought I'd see anything this impressive. LESS power, with MORE power! (pun intended), and DOUBLE the speed, at that!

Funny thing is, I was actually considering an Nvidia gpu when I saw how impressive PhysX was on Batman AA. But I think I would rather have near double the frame rates compared to seeing extra paper fluffing around here and there (though the scenes with the scarecrow are downright amazing). I'll just have to wait and see how the GT300 series does, seeing as I can't afford any of this right now (but boy, oh boy, is that upgrade bug itching like it never has before).

SiliconDoc - Thursday, September 24, 2009

Fine, but performance per dollar is on the very low end, often the lowest of all the cards. That's why it was omitted here.

http://www.techpowerup.com/reviews/ATI/Radeon_HD_5...

THE LOWEST overall, or darn near it.

erple2 - Friday, September 25, 2009

So what you're saying then is that everyone should buy the 9500 GT and ignore everything else? If that's the most important thing to you, then clearly, that's what you mean.

I think that the performance per dollar metrics that are shown are misleading at best and terrible at worst. It does not take into account that any frame rates significantly above your monitor refresh are for all intents and purposes wasted, and any frame rates significantly below 30 should be heavily weighted negatively. I haven't seen how techpowerup does their "performance per dollar" or how (if at all) they weight the FPS numbers in the dollar category.

SLI/Crossfire has always been a lose-lose in the "performance per dollar" category. Curiously, I don't see any of the nvidia SLI cards listed (other than the 295).

That sounds like biased "reporting" on your part.