AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST. Posted in: GPUs

Eyefinity

Somewhere around 2006 - 2007 ATI was working on the overall specifications for what would eventually turn into the RV870 GPU. These GPUs are designed by combining the views of ATI's engineers with the demands of the developers, end-users and OEMs. In the case of Eyefinity, the initial demand came directly from the OEMs.

ATI was working on the mobile version of its RV870 architecture and realized that it had a number of DisplayPort (DP) outputs at the request of OEMs. The OEMs wanted up to six DP outputs from the GPU, but with only two active at a time. The six came from two for internal panel use (if an OEM wanted to do a dual-monitor notebook, which has happened since), two for external outputs (one DP and one DVI/VGA/HDMI for example), and two for passing through to a docking station. Again, only two had to be active at once, so the GPU had six sets of DP lanes but only enough display engines to drive two simultaneously.

ATI looked at the effort required to enable all six outputs at the same time and made it so, thus the RV870 GPU can output to a maximum of six displays at the same time. Not all cards support this as you first need to have the requisite number of display outputs on the card itself. The standard Radeon HD 5870 can drive three outputs simultaneously: any combination of the DVI and HDMI ports for up to 2 monitors, and a DisplayPort output independent of DVI/HDMI. Later this year you'll see a version of the card with six mini-DisplayPort outputs for driving six monitors.

It's not just hardware; there's a software component as well. The Radeon HD 5000 series driver allows you to combine all of these display outputs into one single large surface, visible to Windows and your games as a single display with tremendous resolution.

I set up a group of three Dell 24" displays (U2410s). This isn't exactly what Eyefinity was designed for since each display costs $600, but the point is that you could group three $200 1920 x 1080 panels together and potentially have a more immersive gaming experience (for less money) than a single 30" panel.

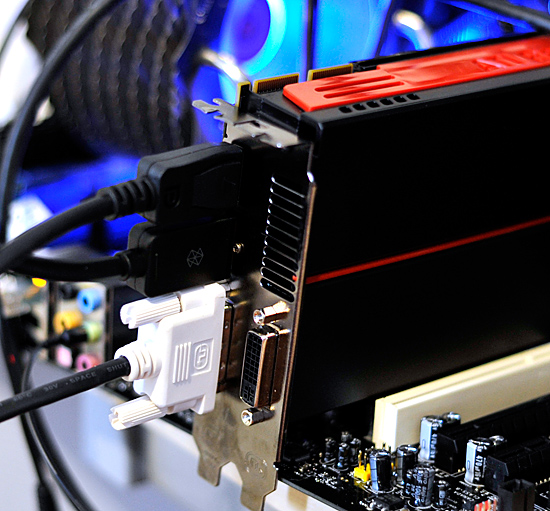

For our Eyefinity tests I chose to use every single type of output on the card, that's one DVI, one HDMI and one DisplayPort:

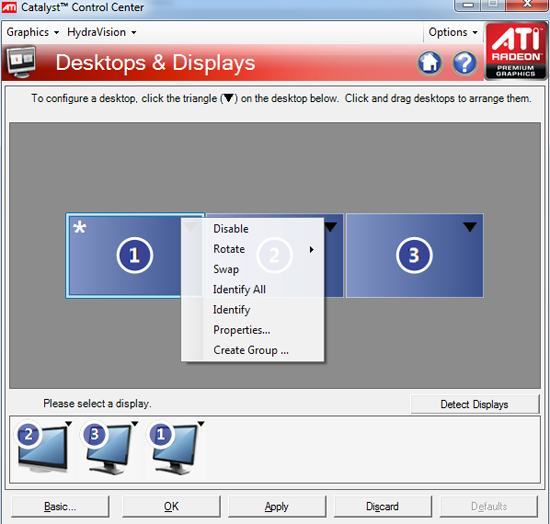

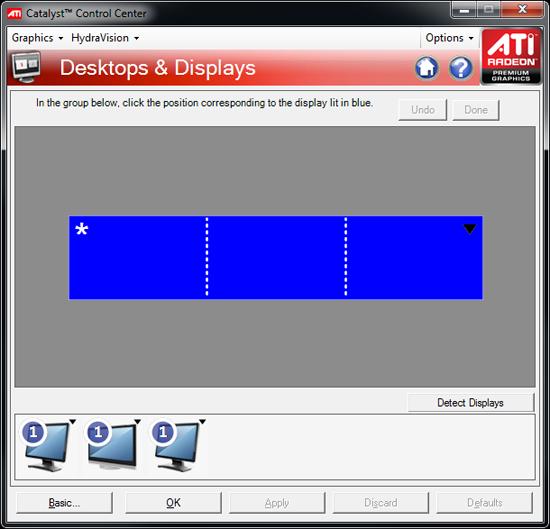

With all three outputs connected, Windows defaults to cloning the display across all monitors. Going into ATI's Catalyst Control Center lets you configure your Eyefinity groups:

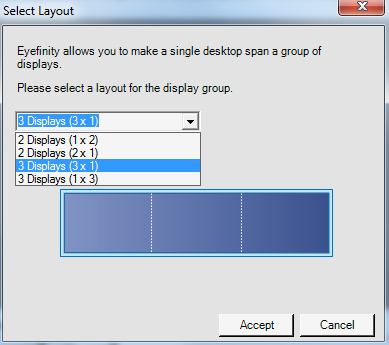

With three displays connected I could create a single 1x3 or 3x1 arrangement of displays. I also had the ability to rotate the displays first so they were in portrait mode.
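As a rough illustration of what those groupings mean for resolution, the sketch below multiplies out the panel grid. It assumes identical 1920 x 1200 panels (the native resolution of the U2410s used here) and ignores bezel gaps; the function name and parameters are made up for this example and are not part of ATI's driver.

```python
def surface_resolution(panel_w, panel_h, cols, rows, portrait=False):
    """Return (width, height) of one combined surface built from a grid of
    identical panels. Hypothetical helper; bezels are ignored."""
    if portrait:
        # Rotating a panel by 90 degrees swaps its width and height.
        panel_w, panel_h = panel_h, panel_w
    return panel_w * cols, panel_h * rows


# Three landscape panels side by side.
print(surface_resolution(1920, 1200, cols=3, rows=1))
# The same three panels rotated to portrait first.
print(surface_resolution(1920, 1200, cols=3, rows=1, portrait=True))
# Three landscape panels stacked vertically.
print(surface_resolution(1920, 1200, cols=1, rows=3))
```

The first call gives 5760 x 1200, the portrait group comes out at 3600 x 1920, and the vertical stack at 1920 x 3600.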

You can create smaller groups, although the ability to do so disappeared after I created my first Eyefinity setup (even after deleting it and trying to recreate it). Once you've selected the type of Eyefinity display you'd like to create, the driver will make a guess as to the arrangement of your panels.

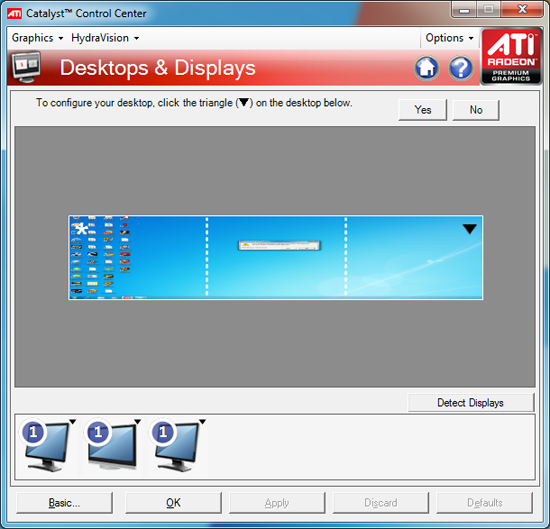

If it guessed correctly, just click Yes and you're good to go. Otherwise ATI has a handy way of determining the location of your monitors:

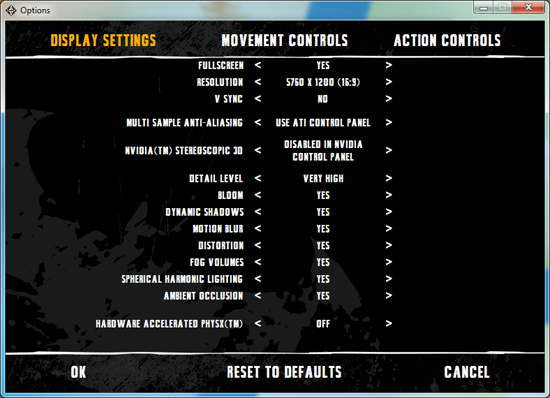

With the software side taken care of, you now have a Single Large Surface as ATI likes to call it. The display appears as one contiguous panel with a ridiculous resolution to the OS and all applications/games:

Three 24" panels in a row give us 5760 x 1200

The screenshot above should clue you in to the first problem with an Eyefinity setup: aspect ratio. While the Windows desktop simply expands to provide you with more screen real estate, some games may not increase how much you can see - they may just stretch the viewport to fill all of the horizontal resolution. The resolution is correctly listed in Batman: Arkham Asylum, but the aspect ratio is not (5760:1200 is 4.8:1, nowhere near 16:9). In these situations my Eyefinity setup made me feel downright sick; the weird stretching of characters as they moved towards the outer edges of my vision left me feeling ill.

Despite Oblivion's support for ultra wide aspect ratio gaming, by default the game stretches to occupy all horizontal resolution

Other games have their own quirks. Resident Evil 5 correctly identified the resolution but appeared to maintain a 16:9 aspect ratio without stretching. In other words, while my display was only 1200 pixels high, the game rendered as if it were 3240 pixels high and only fit what it could onto my screens. This resulted in unusable menus and a game that wasn't actually playable once you got into it.
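The numbers behind that behavior are easy to check. The sketch below is only the arithmetic, not anything the game or driver actually runs; the helper names are invented for this example.

```python
from fractions import Fraction


def aspect_ratio(width, height):
    """Reduce a resolution to its simplest width:height ratio."""
    return Fraction(width, height)


def height_for_aspect(width, aspect_w, aspect_h):
    """Height a frame needs in order to keep aspect_w:aspect_h at this width."""
    return width * aspect_h // aspect_w


surface = aspect_ratio(5760, 1200)
print(surface, float(surface))           # ratio of the three-panel surface
print(float(aspect_ratio(16, 9)))        # ratio the game assumed

needed = height_for_aspect(5760, 16, 9)  # height of a 16:9 frame 5760 pixels wide
visible_fraction = 1200 / needed         # share of that frame that fits on screen
print(needed, round(visible_fraction, 2))
```

A 16:9 frame that is 5760 pixels wide needs 3240 rows, so a 1200-pixel-tall surface can show only about 37% of it, which matches the cut-off menus described above.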

Games with pre-rendered cutscenes generally don't mesh well with Eyefinity either. In fact, anything that's not rendered on the fly tends to only occupy the middle portion of the screens. Game menus are a perfect example of this:

There are other issues with Eyefinity that go beyond just properly taking advantage of the resolution. While the three-monitor setup pictured above is great for games, it's not ideal in Windows. You'd want your main screen to be the one in the center, however since it's a single large display your start menu would actually appear on the leftmost panel. The same applies to games that have a HUD located in the lower left or lower right corners of the display. In Oblivion your health, magic and endurance bars all appear in the lower left, which in the case above means that the far left corner of the left panel is where you have to look for your vitals. Given that each panel is nearly two feet wide, that's a pretty far distance to look.

The biggest issue that everyone worried about was bezel thickness hurting the experience. To be honest, bezel thickness was only an issue for me when I oriented the monitors in portrait mode. Sitting close to an array of wide enough panels, the bezel thickness isn't that big of a deal. Which brings me to the next point: immersion.

The game that sold me on Eyefinity was actually one that I don't play: World of Warcraft. The game handled the ultra wide resolution perfectly; it didn't stretch any content, it just expanded my viewport. With the left and right displays tilted inwards slightly, WoW was more immersive. It's not so much that I could see what was going on around me, but that whenever I moved forward I had the game world in more of my peripheral vision than I usually do. Running through a field felt more like running through a field, since there was more field in my vision. It's the only example where I actually felt like this was the first step towards the holy grail of creating the Holodeck. The effect was pretty impressive, although costly given that I only really attained it in a single game.

Before using Eyefinity for myself I thought I would hate the bezel thickness of the Dell U2410 monitors and I felt that the experience wouldn't be any more engaging. I was wrong on both counts, but I was also wrong to assume that all games would just work perfectly. Out of the four that I tried, only WoW worked flawlessly - the rest either had issues rendering at the unusually wide resolution or simply stretched the content and didn't give me as much additional viewspace to really make the feature useful. Will this all change given that in six months ATI's entire graphics lineup will support three displays? I'd say that's more than likely. The last company to attempt something similar was Matrox and it unfortunately didn't have the graphics horsepower to back it up.

The Radeon HD 5870 itself is fast enough to render many games at 5760 x 1200 even at full detail settings. I managed 48 fps in World of Warcraft and a staggering 66 fps in Batman Arkham Asylum without AA enabled. It's absolutely playable.

327 Comments

SiliconDoc - Thursday, September 24, 2009

Are you seriously going to claim that all ATI are not generally hotter than the nvidia cards? I don't think you really want to do that, no matter how much you wail about fan speeds.

The numbers have been here for a long time and they are all over the net.

When you have a smaller die cranking out the same framerate/video, there is simply no getting around it.

You talked about the 295, as it really is the only nvidia that compares to the ati card in this review in terms of load temp, PERIOD.

In any other sense, the GT8800 would be laughed off the pages comparing it to the 5870.

Furthermore, one merely needs to look at the WATTAGE of the cards, and that is more than a plenty accurate measuring stick for heat on load, divided by surface area of the core.

No, I'm not the one not thinking, I'm not the one TROLLING, the TROLLING is in the ARTICLE, and the YEAR plus of covering up LIES we've had concerning this very issue.

Nvidia cards run cooler, ati cards run hotter, PERIOD.

You people want it in every direction, with every lying whine for your red god, so pick one or the other:

1. The core sizes are equivalent, or 2. the giant expensive dies of nvidia run cooler compared to the "efficient" "new technology" "packing the data in" smaller, tiny, cheap, profit margin producing ATI cores.

------

NOW, it doesn't matter what lies or spin you place upon the facts, the truth is absolutely apparent, and you WON'T be changing the physical laws of the universe with your whining spin for ati, and neither will the trolling in the article. I'm going to stick my head in the sand and SCREAM LOUDLY because I CAN'T HANDLE anyone with a lick of intelligence NOT AGREEING WITH ME! I LOVE TO LIE AND TYPE IN CAPS BECAUSE THAT'S HOW WE ROLL IN ILLINOIS!

SiliconDoc - Friday, September 25, 2009

Well that is amazing, now a mod or site master has edited my text.

Wow.

erple2 - Friday, September 25, 2009

This just gets better and better...

Ultimately, the true measure of how much waste heat a card generates will have to look at the power draw of the card, tempered with the output work that it's doing (aka FPS in whatever benchmark you're looking at). Since I haven't seen that kind of comparison, it's impossible to say anything at all about the relative heat output of any card. So your conclusions are simply biased towards what you think is important (and that should be abundantly clear).

Given that, one must look at the performance per watt. The only wattage figures we have are for OCCT or WoW, so those are the only conclusions one can draw from this article. Since I didn't see the results from the OCCT test in a nice, convenient FPS measure, the WoW numbers give us the following:

5870: 73 fps at 295 watts = 0.247 FPS per watt

275: 44.3 fps at 317 watts = 0.140 FPS per watt

285: 45.7 fps at 323 watts = 0.141 FPS per watt

295: 68.9 fps at 380 watts = 0.181 FPS per watt

That means that the 5870 wins by at least 36% over the other 3 cards. That means that for this observation, the 5870 is, in fact, the most efficient of these cards. It therefore generates less heat than the other 3 cards. Looking at the temperatures of the cards, that strictly measures the efficiency of the cooler, not the efficiency of the actual card itself.
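The ratios above can be recomputed from the quoted frame rates and wattages. The sketch below does only that division; the variable and function names are invented for this example, and the wattage figures are used exactly as quoted in the comment.

```python
# (fps, watts) pairs as quoted in the comment for the WoW test.
results = {
    "5870": (73.0, 295),
    "275": (44.3, 317),
    "285": (45.7, 323),
    "295": (68.9, 380),
}


def fps_per_watt(fps, watts):
    """Frames per second delivered for each watt drawn."""
    return fps / watts


efficiency = {card: fps_per_watt(fps, watts) for card, (fps, watts) in results.items()}

# Print the cards from most to least efficient.
for card, value in sorted(efficiency.items(), key=lambda item: item[1], reverse=True):
    print(f"{card}: {value:.3f} FPS per watt")

# Lead of the 5870 over the best of the remaining cards, in percent.
runner_up = max(value for card, value in efficiency.items() if card != "5870")
lead_percent = (efficiency["5870"] / runner_up - 1) * 100
print(f"5870 lead over the next best card: {lead_percent:.1f}%")
```

On these figures the closest competitor is the 295, and the 5870's lead over it works out to a little over 36%.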

You can say that you think that I'm biased, but ultimately, that's the data I have to go on, and therefore those are the conclusions that can be made. Unfortunately, there's nothing in your post (or more or less all of your posts) that can be verified by any of the information gleaned from the article, and therefore, your conclusions are simply biased speculation.

SiliconDoc - Saturday, September 26, 2009

4870, 55nm, 256mm die, 150watts HOT

G260, 55nm, 576mm die, 171watts COLD

3870, 55nm, 192mm die, 106watts HOT

That's all the further I should have to go.

3870 has THE LOWEST LOAD POWER USAGE ON THE CHARTS

- but it is still 90C, at the very peak of heat,

because it has THE TINIEST CORE !

THE SMALLEST CORE IN THE WHOLE DANG BEJEEBER ARTICLE !

It also has the lowest framerate - so there goes that erple theory.

---

The anomalies you will notice if you look, are due to nm size, memory amount on board (less electricity used by the memory means the core used more), and one slot vs two slot coolers, as examples, but the basic laws of physics cannot be thrown out the window because you feel like doing it, nor can idiotic ideas like framerate come close to predicting core temp and its heat density at load.

Older CPUs may have horrible framerates and horribly high temps, for instance. The 4850 frames do not equal the 4870's, but their core temp/heat density envelope is very close to identical ( SAME CORE SIZE > the 4850 having some die shaders disabled and ddr3, the 4870 with ddr5 full core active more watts for mem and shaders, but the same PHYSICAL ISSUES - small core, high wattage for area, high heat)
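For reference, the power-density argument in this comment can be worked through with its own figures. The sketch below is purely illustrative: the wattages and die sizes are the commenter's unverified numbers, the die sizes are assumed to be in square millimeters, the first card is taken to be the Radeon HD 4870 and "G260" to be the GeForce GTX 260, and the function name is made up for this example. Watts per unit of die area says nothing by itself about the cooler fitted to each card.

```python
# (load watts, die area in mm^2) as quoted in the comment; unverified figures.
cards = {
    "HD 4870": (150, 256),
    "GTX 260": (171, 576),
    "HD 3870": (106, 192),
}


def power_density(watts, die_mm2):
    """Watts dissipated per square millimeter of die area."""
    return watts / die_mm2


density = {name: power_density(watts, area) for name, (watts, area) in cards.items()}

# Print the cards from highest to lowest power density.
for name, value in sorted(density.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {value:.3f} W/mm^2")
```

On these numbers the two ATI parts land at roughly 0.59 and 0.55 W/mm², against about 0.30 W/mm² for the larger NVIDIA die.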

erple2 - Tuesday, September 29, 2009

I didn't say that the 3870 was the most efficient card. I was talking about the 5870. If you actually read what I had typed, I did mention that you have to look at how much work the card is doing while consuming that amount of power, not just temperatures and wattage.

You sir, are a Nazi.

Actually, once you start talking about heat density at load, you MUST look at the efficiency of the card at converting electricity into whatever it's supposed to be doing (other than heating your office). Sadly, the only real way that we have to abstractly measure the work the card is doing is "FPS". I'm not saying that FPS predict core temperature.

SiliconDoc - Wednesday, September 30, 2009

No, the efficiency of conversion you talk about has NOTHING to do with core temp AT ALL. The card could be massively efficient or inefficient at produced framerate, or just ERROR OUT with a sick loop in the core, and THAT HAS ABSOLUTELY NOTHING TO DO WITH THE CORE TEMP. IT RESTS ON WATTS CONSUMED EVEN IF FRAMERATE OUTPUT IS ZERO OR 300/SECOND.

(Your mind seems to have imagined that if the red god is slinging massive frames "out the dvi port" a giant surge of electricity flows through it to the monitor, and therefore "does not heat the card".)

I suggest you examine that lunatic red notion.

What YOU must look at is a red rooster rooter rimshot, in order that your self deception and massive mistake and face saving is in place, for you. At least JaredWalton had the sense to quietly skitter away.

Well, being wrong forever and never realizing a thing is perhaps the worst road to take.

PS - Being correct and making sure the truth is defended has nothing to do with some REDEYE cliché, and I certainly doubt the Gregalouge would embrace red rooster canada card bottom line crumbled for years ever more in a row, and diss big green corporate profits, as we both obviously know.

" at converting electricity into whatever it's supposed to be doing (other than heating your office). "

ONCE IT CONVERTS ELECTRICITY, AS IN "SHOWS IT USED MORE WATTS" it doesn't matter one ding dang smidgen what framerate is,

it could loop sand in the core and give you NO screen output,

and it would still heat up while it "sat on its lazy", tarding upon itself.

The card does not POWER the monitor and have the monitor carry more and more of the heat burden if the GPU sends out some sizzly framerates and the "non-used up watts" don't go sailing out the cards connector to the monitor so that "heat generation winds up somewhere else".

When the programmers optimize a DRIVER, and the same GPU core suddenly sends out 5 more fps everything else being the same, it may or may not increase or decrease POWER USAGE. It can go ANY WAY. Up, down, or stay the same.

If they code in more proper "buffer fills" so the core is hammered solid, instead of flakey filling, the framerate goes up - and so does the temp!

If they optimize, for instance, an algorithm that better predicts what does not need to be drawn as it rests behind another image on top of it, framerate goes up, while temp and wattage used GOES DOWN.

---

Even with all of that, THERE IS ONLY ONE PLACE FOR THE HEAT TO ARISE... AND IT AIN'T OUT THE DANG CABLE TO THE MONITOR!

SiliconDoc - Friday, September 25, 2009

You can modify that, or be more accurate, by using core mass, (including thickness of the competing dies) - since the core mass is what consumes the electricity, and generates heat. A smaller mass (or die size, almost exclusively referred to in terms of surface area with the assumption that thickness is identical or near so) winds up getting hotter in terms of degrees of Celsius when consuming a similar amount of electricity.

Doesn't matter if one frame, none, or a thousand reach your eyes on the monitor.

That's reality, not hokum. That's why ATI cores run hotter, they are smaller and consume a similar amount of electricity, that winds up as heat in a smaller mass, that means hotter.

Also, in actuality, the ATI heatsinks in a general sense, have to be able to dissipate more heat with less surface area as a transfer medium, to maintain the same core temps as the larger nvidia cores and HS areas, so indeed, should actually be "better stock" fans and HS.

I suspect they are slightly better as a general rule, but fail to excel enough to bring core load temps to nvidia general levels.

erple2 - Friday, September 25, 2009

You understand that if there were no heatsink/cooling device on a GPU, it would heat up to crazy levels, far more than would be "healthy" for any silicon part, right? And you understand that measuring the efficiency of a part involves a pretty strong correlation between the input power draw of the card vs. the work that the card produces (which we can really only measure based on the output of the card, namely FPS), right?

So I'm not sure that your argument means anything at all?

Curiously, the wattage listed is for the entire system, not just for the card. Which means that the actual difference between the ATI cards and the nvidia cards is even larger (as a percentage, at least). I don't know what the "baseline" power consumption (sans video card) is for the system acting as the test bed.

Ultimately, the amount of electricity running through the GPU doesn't necessarily tell you how much heat the processors generate. It's dependent on how much of that power is "wasted" as heat energy (that's Thermodynamics for you). The only way to really measure the heat production of the GPU is to determine how much power is "wasted" as heat. Curiously, you can't measure that by measuring the temperature of the GPU. Well, you CAN, but you'd have to remove the Heatsink (and Fan). Which, for ANY GPU made in the last 15 years, would cook it. Since that's not a viable alternative, you simply can't make broad conclusions about which chip is "hotter" than another. And that is why your conclusions are inconclusive.

BTW, the 5870 consumes "less" power than the 275, 285 and 295 GPUs (at least, when playing WoW).

I understand that there may be higher wattage per square millimeter flowing through the 5870 than the GTX cards, but I don't see how that measurement alone is enough to state whether the 5870 actually gets hotter.

SiliconDoc - Saturday, September 26, 2009

Take a look at SIZE my friend.

http://www.hardforum.com/showthread.php?t=1325165

There's just no getting around the fact that the more joules of heat in any time period (wattage used!= amount of joules over time!) that go into a smaller area, the hotter it gets, faster !

Nothing changes this, no red rooster imagination will ever change it.

SiliconDoc - Saturday, September 26, 2009

NO, WRONG.

"Ultimately, the true measure of how much waste heat a card generates will have to look at the power draw of the card, tempered with the output work that it's doing (aka FPS in whatever benchmark you're looking at)."

NO, WRONG.

---

Look at any of the cards' power draw in idle or load. They heat up no matter how much "work" you claim they do, by looking at any framerate, because they don't draw the power unless they USE THE POWER. That's the law that includes what usage of electricity MEANS for the law of thermodynamics, or for E=MC2.

DUHHHHH.

---

If you're so bent on making idiotic calculations and applying them to the wrong ideas and conclusions, why don't you take core die size and divide by watts (the watts the companies issue or take it from the load charts), like you should ?

I know why. We all know why.

---

The same thing is beyond absolutely apparent in CPU's, their TDP, their die size, and their heat envelope, including their nm design size.

DUHHH. It's like talking to a red fanboy who cannot face reality, once again.