AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

More GDDR5 Technologies: Memory Error Detection & Temperature Compensation

As we previously mentioned, for Cypress, AMD’s memory controllers have implemented a greater part of the GDDR5 specification. Beyond gaining the ability to use GDDR5’s power-saving features, AMD has also been working on implementing features to allow their cards to reach higher memory clock speeds. Chief among these is support for GDDR5’s error detection capabilities.

One of the biggest problems in using a high-speed memory device like GDDR5 is that it requires a bus that’s both fast and fairly wide - properties that generally run counter to each other in designing a device bus. A single GDDR5 memory chip on the 5870 needs to connect to a bus that’s 32 bits wide and runs at a base speed of 1.2GHz, which requires a bus that can meet exceedingly precise tolerances. Adding to the challenge is that for a card like the 5870 with a 256-bit total memory bus, eight of these buses will be required, leading to more noise from adjoining buses and less room to work in.
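To put rough numbers on those figures, here is a back-of-the-envelope calculation. It assumes GDDR5 moves four data bits per pin per base clock cycle, a detail not spelled out above, and the function name is ours for illustration.

```python
# Rough GDDR5 bandwidth arithmetic for the bus figures quoted above.
# Assumption: 4 data bits per pin per base (command) clock cycle.
TRANSFERS_PER_CLOCK = 4


def bandwidth_gb_per_s(bus_width_bits: int, base_clock_ghz: float) -> float:
    """Peak bandwidth in GB/s for a bus of the given width and base clock."""
    gbit_per_pin = base_clock_ghz * TRANSFERS_PER_CLOCK
    return gbit_per_pin * bus_width_bits / 8


per_chip = bandwidth_gb_per_s(32, 1.2)     # one chip on its own 32-bit bus
whole_card = bandwidth_gb_per_s(256, 1.2)  # eight such buses side by side

print(f"per chip:   {per_chip:.1f} GB/s")    # 19.2 GB/s
print(f"whole card: {whole_card:.1f} GB/s")  # 153.6 GB/s
```

Every one of those 256 data lines has to sustain the same per-pin rate at once, which is where the tight tolerances come from.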

Because of the difficulty in building such a bus, the memory bus has become the weak point for video cards using GDDR5. The GPU’s memory controller can do more and the memory chips themselves can do more, but the bus can’t keep up.

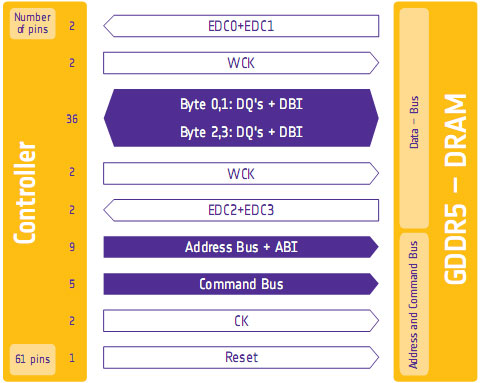

To combat this, GDDR5 memory controllers can perform basic error detection on both reads and writes by implementing a CRC-8 hash function. With this feature enabled, for each 64-bit data burst an 8-bit cyclic redundancy check hash (CRC-8) is transmitted via a set of four dedicated EDC pins. This CRC is then used to check the contents of the data burst, to determine whether any errors were introduced into the data burst during transmission.
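As a minimal sketch of that check, the snippet below computes a CRC-8 over a 64-bit burst and compares it on the receiving side. The generator polynomial (x^8 + x^2 + x + 1) and the zero initial value are our assumptions, as are the function names; the real link also spreads the checksum across the EDC pins, which is not modeled here.

```python
CRC8_POLY = 0x07  # x^8 + x^2 + x + 1 with the x^8 term implicit (assumed polynomial)


def crc8(data: bytes) -> int:
    """Bitwise CRC-8, most significant bit first, initial value 0."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ CRC8_POLY) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def burst_is_clean(received_data: bytes, received_crc: int) -> bool:
    """Receiver side: recompute the CRC and compare it with the one sent."""
    return crc8(received_data) == received_crc


burst = bytes.fromhex("0123456789abcdef")          # one 64-bit data burst
sent_crc = crc8(burst)                             # computed by the sender
corrupted = bytes([burst[0] ^ 0x10]) + burst[1:]   # one bit flipped on the bus

print(burst_is_clean(burst, sent_crc))      # True
print(burst_is_clean(corrupted, sent_crc))  # False
```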

The specific CRC function used in GDDR5 can detect 1-bit and 2-bit errors with 100% accuracy, with that accuracy falling as more bits in a burst are corrupted. This is because any CRC can produce collisions: in an unlikely situation, the CRC of an erroneous data burst could match the proper CRC. But because each additional erroneous bit in a single burst is less likely than the last, the vast majority of errors should be limited to 1-bit and 2-bit errors.
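The coverage claim can be checked by brute force. A CRC misses an error exactly when the pattern of flipped bits, read as a polynomial over GF(2), is divisible by the generator polynomial. The sketch below assumes the generator x^8 + x^2 + x + 1 and a 72-bit codeword (64 data bits plus the 8 CRC bits); the actual GDDR5 framing may differ, and the helper names are ours.

```python
from itertools import combinations

GENERATOR = 0x107        # x^8 + x^2 + x + 1 (assumed polynomial)
CODEWORD_BITS = 64 + 8   # data burst plus its CRC, per the description above


def gf2_remainder(value: int, generator: int = GENERATOR) -> int:
    """Remainder of polynomial division over GF(2); bits are coefficients."""
    gen_degree = generator.bit_length() - 1
    while value.bit_length() > gen_degree:
        shift = value.bit_length() - 1 - gen_degree
        value ^= generator << shift
    return value


def undetected(error_bits) -> bool:
    """True if flipping exactly these codeword bit positions slips past the CRC."""
    pattern = 0
    for bit in error_bits:
        pattern |= 1 << bit
    return gf2_remainder(pattern) == 0


def count_undetected(weight: int) -> int:
    """Count the error patterns of the given bit count that go unnoticed."""
    return sum(1 for bits in combinations(range(CODEWORD_BITS), weight)
               if undetected(bits))


print(count_undetected(1))       # 0: every 1-bit error is caught
print(count_undetected(2))       # 0: every 2-bit error is caught
print(undetected((0, 1, 2, 8)))  # True: a 4-bit error matching the generator
```

The last line is the collision case: four flipped bits that happen to line up with the generator polynomial leave the checksum looking valid.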

Should an error be found, the GDDR5 controller will request a retransmission of the faulty data burst, and it will keep doing this until the data burst finally goes through correctly. A retransmission request is also used to re-train the GDDR5 link (once again taking advantage of fast link re-training) to correct any potential link problems brought about by changing environmental conditions. Note that this does not involve changing the clock speed of the GDDR5 (i.e. it does not step down in speed); rather it’s merely reinitializing the link. If the errors are due to the bus being outright unable to perfectly handle the requested clock speed, errors will continue to happen and be caught. Keep this in mind as it will be important when we get to overclocking.
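A toy simulation of that retry loop is sketched below. Everything in it is an illustrative assumption: the bus is modeled as independent random bit flips, `zlib.crc32` stands in for the link's CRC-8 to keep the code short, the checksum itself is assumed to arrive intact, and re-training is reduced to a counter.

```python
import random
import zlib


def noisy_bus(payload: bytes, bit_error_rate: float, rng: random.Random) -> bytes:
    """Return the payload with each bit flipped independently at the given rate."""
    out = bytearray(payload)
    for bit in range(len(out) * 8):
        if rng.random() < bit_error_rate:
            out[bit // 8] ^= 1 << (bit % 8)
    return bytes(out)


def read_burst(stored: bytes, bit_error_rate: float, rng: random.Random,
               max_attempts: int = 10_000) -> tuple[bytes, int, int]:
    """Retransmit until the checksum matches; return (data, attempts, retrains)."""
    checksum = zlib.crc32(stored)  # stand-in for the CRC-8, assumed to arrive intact
    retrains = 0
    for attempt in range(1, max_attempts + 1):
        received = noisy_bus(stored, bit_error_rate, rng)
        if zlib.crc32(received) == checksum:
            return received, attempt, retrains
        retrains += 1  # a failed check re-trains the link; the clock stays put
    raise RuntimeError("bus never delivered a clean burst")


rng = random.Random(2009)
burst = bytes(range(8))  # one 64-bit burst
for rate in (0.0, 0.005, 0.02):
    total = sum(read_burst(burst, rate, rng)[1] for _ in range(1000))
    print(f"bit error rate {rate}: {total / 1000:.2f} transmissions per burst")
```

Because the clock never steps down, a bus that is simply being driven too fast keeps its error rate, and the average number of transmissions per burst stays above one for as long as it runs at that speed.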

Finally, we should also note that this error detection scheme is only for detecting bus errors. Errors in the GDDR5 memory modules or errors in the memory controller will not be detected, so it’s still possible to end up with bad data should either of those two devices malfunction. By the same token this is solely a detection scheme, so there are no error correction abilities. The only way to correct a transmission error is to keep trying until the bus gets it right.

Now in spite of the difficulties in building and operating such a high speed bus, error detection is not necessary for its operation. As AMD was quick to point out to us, cards still need to ship defect-free and not produce any errors. Or in other words, the error detection mechanism is a failsafe mechanism rather than a tool specifically to attain higher memory speeds. Memory supplier Qimonda’s own whitepaper on GDDR5 pitches error detection as a necessary precaution due to the increasing amount of code stored in graphics memory, where a failure can lead to a crash rather than just a bad pixel.

In any case, for normal use the ramifications of using GDDR5’s error detection capabilities should be non-existent. In practice, this is going to lead to more stable cards since memory bus errors have been eliminated, but we don’t know to what degree. The full use of the system to retransmit a data burst would itself be a catch-22 after all – it means an error has occurred when it shouldn’t have.

Like the changes to VRM monitoring, the significant ramifications of this will be felt with overclocking. Overclocking attempts that previously would push the bus too hard and lead to errors now will no longer do so, making higher overclocks possible. However this is a bit of an illusion as retransmissions reduce performance. The scenario laid out to us by AMD is that overclockers who have reached the limits of their card’s memory bus will now see the impact of this as a drop in performance due to retransmissions, rather than crashing or graphical corruption. This means assessing an overclock will require monitoring the performance of a card, along with continuing to look for traditional signs as those will still indicate problems in memory chips and the memory controller itself.
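The trade-off can be sketched with simple arithmetic. If a fraction p of bursts fails its CRC check and each retry costs one more burst slot, a burst needs 1/(1 - p) transmissions on average, so useful bandwidth is the raw figure times (1 - p). The model below rests on assumptions of ours: independent errors, no extra cost for link re-training, four transfers per pin per base clock, and an overclock and error rate made up for illustration.

```python
def raw_bandwidth_gb_per_s(base_clock_mhz: float, bus_width_bits: int = 256) -> float:
    """Peak bandwidth, assuming 4 data bits per pin per base clock cycle."""
    return base_clock_mhz * 4 * bus_width_bits / 8 / 1000


def effective_bandwidth_gb_per_s(raw_gb_per_s: float, burst_error_rate: float) -> float:
    """Useful bandwidth once failed bursts are resent until they succeed."""
    if not 0.0 <= burst_error_rate < 1.0:
        raise ValueError("burst_error_rate must be in [0, 1)")
    return raw_gb_per_s * (1.0 - burst_error_rate)


stock = effective_bandwidth_gb_per_s(raw_bandwidth_gb_per_s(1200), 0.0)
# Hypothetical overclock: 10% more clock, but 15% of bursts now need a resend.
pushed = effective_bandwidth_gb_per_s(raw_bandwidth_gb_per_s(1320), 0.15)

print(f"stock:       {stock:.1f} GB/s")   # 153.6 GB/s
print(f"overclocked: {pushed:.1f} GB/s")  # about 143.6 GB/s, slower than stock
```

Under these made-up numbers the higher clock delivers less usable bandwidth than stock, which is the kind of silent regression an overclocker would now have to catch by benchmarking.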

Ideally there would be a more absolute and expedient way to check for errors than looking at overall performance, but at this time AMD doesn’t have a way to deliver error notices. Maybe in the future they will?

Wrapping things up, we have previously discussed fast link re-training as a tool to allow AMD to clock down GDDR5 during idle periods, and as part of a failsafe method to be used with error detection. However it also serves as a tool to enable higher memory speeds through its use in temperature compensation.

Once again due to the high speeds of GDDR5, it’s more sensitive to memory chip temperatures than previous memory technologies were. Under normal circumstances this sensitivity would limit memory speeds, as temperature swings would change the performance of the memory chips enough to make it difficult to maintain a stable link with the memory controller. By monitoring the temperature of the chips and re-training the link when there are significant shifts in temperature, higher memory speeds are made possible by preventing link failures.
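The control logic can be pictured as a simple drift check. The sketch below is purely illustrative: the 5°C threshold, the sampled temperatures, and the function name are hypothetical, and AMD has not described how its controller actually decides when to re-train.

```python
def retrain_points(temps_c: list[float], threshold_c: float = 5.0) -> list[int]:
    """Return the sample indices at which the link would be re-trained.

    The link is assumed to be trained at the first sample. Whenever the chip
    temperature drifts at least threshold_c away from the temperature at the
    last training, the link is re-trained and that reading becomes the new
    reference point.
    """
    if not temps_c:
        return []
    reference = temps_c[0]
    points = []
    for index, temp in enumerate(temps_c[1:], start=1):
        if abs(temp - reference) >= threshold_c:
            points.append(index)
            reference = temp
    return points


# Hypothetical readings as a card warms up under load and then cools off.
samples = [40, 42, 46, 47, 52, 51, 45]
print(retrain_points(samples))  # [2, 4, 6]
```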

And while temperature compensation may not sound complex, that doesn’t mean it’s not important. As we have mentioned a few times now, the biggest bottleneck in memory performance is the bus. The memory chips can go faster; it’s the bus that can’t. So anything that can help maintain a link along these fragile buses becomes an important tool in achieving higher memory speeds.

327 Comments

shaolin95 - Wednesday, October 7, 2009 - link

So Eyefinity may use 100 monitors but if we are still gaming on the flat plant then it makes no difference to me.

Come on ATI, go with the real 3D games already..been waiting since the Radeon 64 SE days for you to get on with it.... :-(

GTX 295 for this boy as it is the only way to real 3D on a 60" DLP.

Nice that they have a fast product at good prices to keep the competition going. If either company goes down we all lose so support them both! :-)

Regards

raptorrage - Tuesday, October 6, 2009 - link

wow what a joke this review is but that i mean the reviewer stance on the 5870 sounds like he is a nvidia fan just because it like what 2-3fps off of the gtx 295 doesn't actually mean it can't catch that gpu as the driver updates come out and get the gpu to actually compete against that gpu and if i remember wasn't the GTX 295 the same when it came out .. its was good but it wasn't where we all thought it should have been then BAM a few months go by and it finds the performance it was missing

i don't know if this was a fail on anandtech or the testing practices but i question them as i've read many other review sites and they had a clear view where the 5870 / GTX 295 where neck N neck as i've seen them first hand so i go ahead and state them here head 2 head @ 1920x1200 but at 2560x1600 the dual gpu cards do take the top slot but that is expected but it isn't as big as a margin as i see it.

and clearly he missed the whole point YES the 5870 dose compete with the GTX 295 i just believe your testing practices do come into question here because i've seen many sites where they didn't form the opinion that you have here it seems completely dismissive like AMD has failed i just don't see that in my opinion - I'll just take this review with a gain of salt as its completely meaningless

dieselcat18 - Saturday, October 3, 2009 - link

@Silicon Doc

Nvidia fan-boy, troll, loser....take your gforce cards and go home...we can now all see how terrible ATi is thanks you ...so I really don't understand why people are beating down their doors for the 5800 series, just like people did for the 4800 and 3800 cards. I guess Nvidia fan-boy trolls like you have only one thing left to do and that's complain and cry like the itty-bitty babies that some of you are about the competition that's beating you like a drum.....so you just wait for your 300 series cards to be released (can't wait to see how many of those are available) so you can pay the overpriced premiums that Nvidia will be charging AGAIN !...hahaha...just like all that re-badging BS they pulled with the 9800 and 200 cards...what a joke !.. Oh my, I must say you have me in a mood and the ironic thing is I do like Nvidia as much as ATi, I currently own and use both. I just can't stand fools like you who spout nothing but mindless crap while waving your team flag (my card is better than your's..WhaaWhaaWhaa)...just take yourself along with your worthless opinions and slide back under that slimly rock you came from.

Scali - Thursday, October 1, 2009 - link

I have the GPU Computing SDK aswell, and I ran the Ocean test on my 8800GTS320. I got 40 fps, with the card at stock, with 4xAA and 16xAF on. Fullscreen or windowed didn't matter.

How can your score be only 47 fps on the GTX285? And why does the screenshot say 157 fps on a GTX280?

157 fps is more along the lines of what I'd expect than 47 fps, given the performance of my 8800GTS.

Ryan Smith - Thursday, October 1, 2009 - link

Full screen, 2560x1600 with everything cranked up. At that resolution, it can be a very rough benchmark.

The screenshot you're seeing is just something we took in windowed mode with the resolution turned way down so that we could fit a full-sized screenshot of the program in to our document engine.

Scali - Friday, October 2, 2009 - link

I've just checked the sourcecode and experimented a bit with changing some constants.

The CS part always uses a dimension of 512, hardcoded, so not related to the screen size.

So the CS load is constant, the larger you make the window, the less you measure the GPGPU-performance, since it will become graphics-limited.

Technically you should make the window as small as possible to get a decent GPGPU-benchmark, not as large as possible.

Scali - Friday, October 2, 2009 - link

Hum, I wonder what you're measuring though.

I'd have to study the code, see if higher resolutions increase only the onscreen polycount, or also the GPGPU-part of generating it.

Scali - Thursday, October 1, 2009 - link

That's 152 fps, not 257, sorry.