AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

AA Image Quality & Performance

With HL2 unsuitable for use in assessing image quality, we will be using Crysis: Warhead for the task. Warhead has a great deal of foliage in parts of the game, which creates an immense amount of aliasing; together with the geometry of nearby objects, this makes for a good test of anti-aliasing quality. Look in particular at the leaves both to the left and through the windshield, along with the aliasing on the frame, windows, and mirror of the vehicle. We’d also like to note that since AMD’s SSAA modes do not work in DX10, this test is done in DX9 mode instead.

| AMD Radeon HD 5870 | AMD Radeon HD 4870 | NVIDIA GTX 280 |
|---|---|---|
| No AA | | |
| 2X MSAA | | |
| 4X MSAA | | |
| 8X MSAA | | |
| 2X MSAA + AAA | 2X MSAA + AAA | 2X MSAA + SSTr |
| 4X MSAA + AAA | 4X MSAA + AAA | 4X MSAA + SSTr |
| 8X MSAA + AAA | 8X MSAA + AAA | 8X MSAA + SSTr |
| 2X SSAA | | |
| 4X SSAA | | |
| 8X SSAA | | |

From an image quality perspective, very little has changed for AMD compared to the 4890. With MSAA and AAA modes enabled the quality is virtually identical. And while things are not identical when flipping between vendors (for whatever reason the sky brightness differs), the resulting image quality is still basically the same.

For AMD, the downside to this IQ test is that SSAA fails to break away from MSAA + AAA. We’ve previously established that SSAA is a superior (albeit brute force) method of anti-aliasing, but we have been unable to find any scene in any game that conclusively proves it. Shader aliasing should be the biggest difference, but in practice we can’t find a DX9 game where such aliasing is obvious. Nor does Crysis: Warhead benefit from the extra texture sampling here.

From our testing, we’re left with the impression that MSAA + AAA (or MSAA + SSTr for NVIDIA) is just as good as SSAA for all practical purposes. Much as with the anisotropic filtering situation, we know through technological proof that there is a better method, but it just isn’t making a noticeable difference here. If nothing else this is good from a performance standpoint, as MSAA + AAA is not nearly as hard on performance as outright SSAA is. Perhaps SSAA is better suited for older games, particularly those locked at lower resolutions?
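A rough way to see why MSAA + AAA is so much cheaper than SSAA is to count pixel-shader invocations per frame. The sketch below is a simplified model, not a measurement: it assumes MSAA shades each pixel once (ignoring extra shading at triangle edges and the memory-bandwidth cost of the extra samples), SSAA shades every sample, and adaptive AA supersamples only the pixels covered by alpha-tested textures such as foliage. The function name `shading_invocations` and the `alpha_fraction` parameter are hypothetical, and the 10% foliage share in the example is an assumed value.

```python
def shading_invocations(width: int, height: int, mode: str,
                        samples: int = 1, alpha_fraction: float = 0.0) -> int:
    """Approximate pixel-shader invocations per frame (simplified model).

    Assumptions, not measurements:
      - "none" and "msaa" shade each pixel once; MSAA's extra coverage and
        depth samples cost bandwidth rather than shading, and edge overlap
        is ignored.
      - "ssaa" shades every sample of every pixel.
      - "msaa+aaa" supersamples only the hypothetical fraction of pixels
        covered by alpha-tested textures (e.g. foliage).
    """
    pixels = width * height
    if mode in ("none", "msaa"):
        return pixels
    if mode == "ssaa":
        return pixels * samples
    if mode == "msaa+aaa":
        alpha_pixels = round(pixels * alpha_fraction)
        # Alpha-tested pixels are shaded per sample; the rest once per pixel.
        return (pixels - alpha_pixels) + alpha_pixels * samples
    raise ValueError(f"unknown mode: {mode!r}")


if __name__ == "__main__":
    # 1920x1200, 4 samples, and an assumed 10% of pixels covered by foliage.
    for mode in ("none", "msaa", "msaa+aaa", "ssaa"):
        print(mode, shading_invocations(1920, 1200, mode,
                                        samples=4, alpha_fraction=0.10))
```

Under these assumptions 4x SSAA does four times the shading work of no AA, while MSAA + AAA adds only a fraction on top of MSAA. Real costs also depend on memory bandwidth and ROP throughput, which this model deliberately leaves out.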

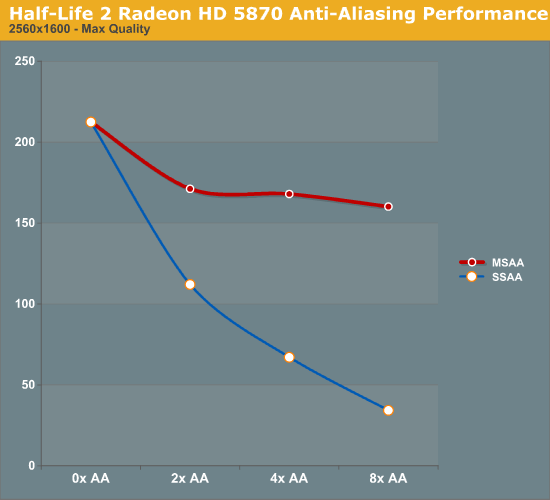

For our performance data, we have two cases. We will first look at HL2 on only the 5870, which we ran before realizing the quality problem with Source-engine games. We believe that the performance data is still correct in spite of the visual bug, and while we’re not going to use it as our only data, we will use it as an example of AA performance in an older title.

As a testament to the rendering power of the 5870, even at 2560x1600 and 8x SSAA, we still get a just-playable framerate on HL2. To put things in perspective, with 8x SSAA the game is being rendered at approximately 32MP, well over the size of even the largest possible single-card Eyefinity display.
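The 32MP figure follows directly from the sample count: 8x SSAA renders eight samples for each of the 2560x1600 pixels. The sketch below reproduces that arithmetic; the Eyefinity comparisons assume groups of three and six 2560x1600 panels, which are illustrative layouts rather than configurations tested in this article, and the function name `megapixels` is hypothetical.

```python
def megapixels(width: int, height: int, samples_per_pixel: int = 1) -> float:
    """Samples rendered per frame, in millions."""
    return width * height * samples_per_pixel / 1_000_000


if __name__ == "__main__":
    hl2_8x_ssaa = megapixels(2560, 1600, samples_per_pixel=8)
    # Illustrative Eyefinity groups built from 2560x1600 panels (assumed layouts).
    three_panels = 3 * megapixels(2560, 1600)
    six_panels = 6 * megapixels(2560, 1600)
    print(f"8x SSAA at 2560x1600: {hl2_8x_ssaa:.1f} MP")
    print(f"3 x 2560x1600 panels: {three_panels:.1f} MP")
    print(f"6 x 2560x1600 panels: {six_panels:.1f} MP")
```

Even the six-panel group works out to about 24.6MP, so the roughly 32.8MP per frame rendered under 8x SSAA is the larger workload.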

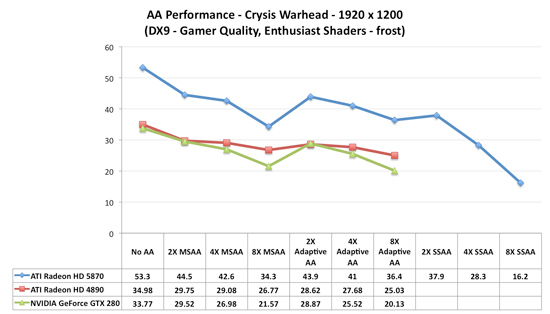

Our second, larger performance test is Crysis: Warhead. Here we are testing the game in DX9 mode again at a resolution of 1920x1200. Since this is a look at the impact of AA on various architectures, we will limit this test to the 5870, the GTX 280, and the Radeon HD 4890. Our interest here is in performance relative to no anti-aliasing, and whether different architectures lose the same amount of performance or not.

Starting with the 5870, moving from 0x AA to 4x MSAA only incurs a 20% drop in performance, while 8x MSAA increases that drop to 35%, leaving 8x MSAA at roughly 80% of 4x MSAA performance. Interestingly, in spite of the heavy foliage in the scene, Adaptive AA has virtually no performance hit over regular MSAA, delivering nearly identical results. SSAA is of course the big loser here, quickly dropping to unplayable levels. As we discussed earlier, the quality of SSAA is no better than MSAA + AAA here.

Moving on, we have the 4890. While the overall performance is lower, interestingly enough the drop in performance from MSAA is not as large, at only 17% for 4x MSAA and 25% for 8x MSAA. This makes the performance of 8x MSAA relative to 4x MSAA 92%. Once again the performance hit from enabling AAA is minuscule, at roughly 1 FPS.

Finally we have the GTX 280. The drop in performance here is in line with that of the 5870: 20% for 4x MSAA and 36% for 8x MSAA, with 8x MSAA offering 80% of the performance of 4x MSAA. Even enabling supersample transparency AA only knocks off 1 FPS, just like AAA on the 5870.
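The "8x as a share of 4x" figures follow from the quoted drops: if a mode loses a fraction d of the no-AA frame rate, it keeps 1 - d, so 8x MSAA relative to 4x MSAA is (1 - d8) / (1 - d4). The sketch below applies that formula to the rounded percentages quoted above; because the inputs are rounded, its results can differ by a point or two from figures computed from raw frame rates. The function and dictionary names are hypothetical.

```python
def relative_8x_to_4x(drop_4x: float, drop_8x: float) -> float:
    """8x MSAA performance as a fraction of 4x MSAA performance.

    drop_4x and drop_8x are fractional frame-rate losses versus no AA,
    e.g. 0.20 for a 20% drop.
    """
    return (1 - drop_8x) / (1 - drop_4x)


# Rounded drops quoted in the text (fraction of the no-AA frame rate lost).
DROPS = {
    "Radeon HD 5870": (0.20, 0.35),
    "Radeon HD 4890": (0.17, 0.25),
    "GTX 280": (0.20, 0.36),
}

if __name__ == "__main__":
    for card, (d4, d8) in DROPS.items():
        share = relative_8x_to_4x(d4, d8)
        print(f"{card}: 8x MSAA keeps {share:.0%} of 4x MSAA performance")
```

With these inputs the 5870 and GTX 280 both land near 80%, while the 4890 keeps noticeably more of its 4x MSAA performance.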

This leaves us with some very curious results. On a percentage basis the 5870 is no better than the GTX 280, which isn’t unreasonable, but it does worse than the 4890. At this point we don’t have a good explanation for the difference; perhaps it’s a product of early drivers or the early BIOS? It’s something that we’ll need to investigate at a later date.

Wrapping things up: as we discussed earlier, AMD has been pitching the idea of better 8x MSAA performance in the 5870 compared to the 4800 series due to the extra cache. Although from a practical perspective we’re not sold on the idea that 8x MSAA is a big enough improvement to justify any performance hit, we can put to rest the idea that the 5870 is any better at 8x MSAA than prior cards. At least in Crysis: Warhead, we’re not seeing it.

327 Comments


RubberJohnny - Thursday, September 24, 2009

Well silicondoc you sure have some hatred for ATI/love for nvidia. It's almost as if you work for the green team...

You seem to have all this time on your hands to go around the net looking for links to spread FUD... sitting on Newegg watching these cards come in and out of stock like you have a vested interest in seeing ATI fail... unlike any sane person, it appears you want nvidia to have a monopoly on the industry?

Maybe you are privy to some inside info over at nvidia and know they have nothing to counter the 5870 with?

Maybe the cash they paid you to spin these BS comments would have been better spent on R&D?

SiliconDoc - Thursday, September 24, 2009

That's a nice personal, grating, insulting ripppp; it's almost funny, too.

---

The real problems remain.

I bring up this stuff because of course, no one else will, it is almost forbidden. Telling the truth shouldn't be that hard, and calling it fairly and honestly should not be such a burden.

I will gladly take correction when one of you noticing insulters has any to offer. Of course, that never comes.

Break some new ground, won't you ?

I don't think you will, nor do I think anyone else will - once again, that simply confirms my factual points.

I guess I'll give you a point for complaining about delivery, if that's what you were doing, but frankly, there are a lot of complainers here no different - let's take for instance the ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275 article here.

http://www.anandtech.com/video/showdoc.aspx?i=3539

Boy, the red fans went into rip mode, and Anand came in and changed the article's (Derek's) words and hence "result", from GTX275 wins to ATI4890 wins.

--

No, it's not just me, it's just the bias here consistently leans to ati, and whether it's rooting for the underdog that causes it, or the brooding undercurrent hatred that surfaces for "the bigshot" "greedy" "ripoff artist" "nvidia overchargers" "industry controlling and bribing" "profit demon" Nvidia, who knows...

I'm just not afraid to point it out, since it's so sickening, yes, probably just to me, "I'm sure".

How about this glaring one I have never pointed out even to this day, but will now:

ATI is ALWAYS listed first, or "on top" - and of course, NVIDIA, second, and it is no doubt, in the "reviewer's minds" because of "the alphabet", and "here we go in alphabetical order".

A very, very convenient excuse, that quite easily causes a perception bias, that is quite marked for the readers.

But, that's ok.

---

So, you want to tell me why I shouldn't laugh out loud when ATI uses NVIDIA cards to develop their "PhysX" competition Bullet ?

ROFLMAO

I have heard 100 times here (from guess whom) that the ati has the wanted "new technology", so will that same refrain come when NVIDIA introduces their never before done MIMD capable cores in a few months ? LOL

I can hardly wait to see the "new technology" wannabes proclaiming their switched fealty.

Gee sorry for noticing such things, I guess I should be a mind numbed zombie babbling along with the PC required fanning for ati ?

silverblue - Thursday, September 24, 2009

No; if he did work for nVidia, he'd be far better informed and far less prone to using the phrase "red rooster" every five seconds.

crackshot91 - Wednesday, September 23, 2009

Any possibility of benchmarks with a Core 2 Duo? I wanna know if it will be necessary to upgrade to an i5 or i7 (All new mobo) to see big performance gains over my 8800GT. Will a C2D E6750 @ 3.2GHz bottleneck it?

Ryan Smith - Wednesday, September 23, 2009

Our recent Core i7 860 article should do an adequate job of answering that question. Several of the benchmarks were taken right out of this article.

therealnickdanger - Wednesday, September 23, 2009

You dedicated a full page to the flawless performance of its A/V output, but didn't mention it in the "features" part of the conclusion. It's a very powerful feature, IMO. Granted, this card may be a tad too hot and loud to find a home in a lot of HTPCs, but it's still an awesome feature and you should probably append your conclusion... just a suggestion though.

Ultimately, I have to admit to being a little disappointed by the performance of this card. All the Eyefinity hype and playable framerates at massive 7000x3000 resolutions led me to believe that this single card would scale down and simply dominate everything at the 30" level and below. It just seems logical, so I was taken aback when it was beat by, well, anything else. I expected the 5870 and 5870CF to be at the top of every chart. Oh well.

Awesome article though! I'm sure there's a 5850 in my future!

MrMom - Wednesday, September 23, 2009

Does anyone have a good explanation why the massive HD5870 is still slower/@par with the GTX295? Thanks

SiliconDoc - Thursday, September 24, 2009

Yes, because the ati core "really sucks". It needs DDR5, and much higher MHZ to compete with Nvidia, and their what, over 1 year old core. LOL Even their own 4870x2. Or the 3 year old G92 vs the ddr3 "4850" the "topcore" before yesterday. (the ati topcore minus the well done 3m mhz+ REBRAND ring around the 4890)

That's the sad, actual truth. That's the truth many cannot bear to bring themselves to realize, and it's going to get WORSE for them very soon, with nvidia's next release, with ddr5, a 512 bit bus, and the NEW TECHNOLOGY BY NVIDIA THAT ATI DOES NOT HAVE MIMD capable cores.

Oh, I can hardly wait, but you bet I'm going to wait, you can count on that 100%.

Spoelie - Thursday, September 24, 2009

because those are 2 480mm² dies, while this is only 1 360mm² die?

Griswold - Wednesday, September 23, 2009

Its one GPU instead of two, maybe?