AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST

Posted in GPUs

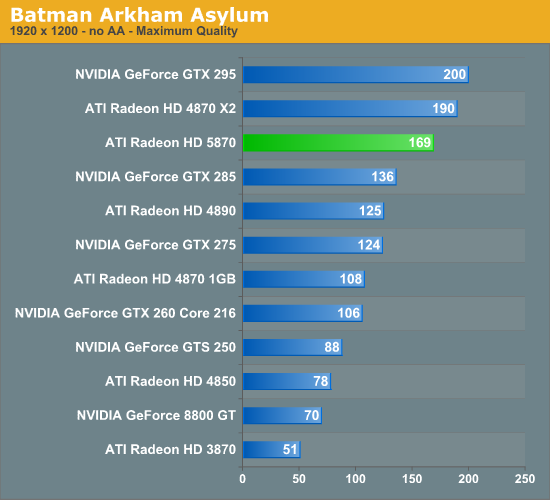

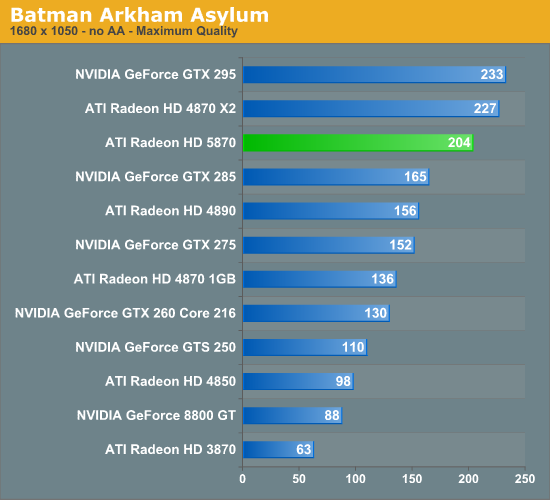

Batman: Arkham Asylum

Batman: Arkham Asylum is another brand-new PC game, and has been burning up the review charts. It’s an Unreal Engine 3 based game, something that’s not immediately obvious from just looking at it, which is rare for UE3 based games.

NVIDIA has put a lot of marketing muscle into the game as part of its The Way It’s Meant to Be Played program, and as a result it ships with PhysX support and 3D Vision support. Unfortunately, NVIDIA’s influence has extended to the game’s anti-aliasing too: its in-game selective AA only works on NVIDIA’s cards. AMD’s cards can perform AA on the game, but only via traditional full screen anti-aliasing, which isn’t nearly as efficient. As a result, this is the only game where we will not be using AA, as doing so produces meaningless results given the different AA modes used.

Without the use of AA, the performance in this game is best described as “runaway”. The 5870 turns in a score of 102fps, and even the GTS 250 manages 53fps. However, we’re also seeing the 5870’s performance pattern maintained here: it beats the single-GPU cards and loses to the multi-GPU cards.

327 Comments

avaughan - Wednesday, September 23, 2009 - link

Ryan,

When you review the 5850, can you please specify memory size for all the comparison cards? At a guess, the GTS 250 had 1GB and the 9800GT had 512MB?

Thanks

ThePooBurner - Wednesday, September 23, 2009 - link

With a full double the power and transistors and everything else, including optimizations that should get more bang for the buck out of each one of those, why are we not seeing a full double the performance in games compared to the previous generation of cards?

SiliconDoc - Wednesday, September 23, 2009 - link

Umm, because if you actually look at the charts, it's not "double everything".

In fact, it's not double THE MOST IMPORTANT THING, bandwidth.

For Pete's sake, an OC'ed GTX260 core 192 gets to 154+ bandwidth rather easily, surpassing the 153.6 of ati's latest and greatest.

So, you have barely increased ram speed, same bus width... and the transistors on die are used up in "SHADERS" and ROPS....etc.
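The bandwidth figures traded in this thread follow from simple arithmetic: peak memory bandwidth is the bus width in bytes multiplied by the effective per-pin data rate. The sketch below is only an illustration of that formula. The function names are made up for this example, and the 256-bit bus and 4.8 Gbps effective data rate plugged in for the 5870 are assumptions taken from the card's published specifications, not numbers stated in the comments.

```python
def memory_bandwidth_gbs(bus_width_bits: float, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s.

    bus_width_bits: width of the memory bus in bits.
    data_rate_gbps: effective data rate per pin in Gbit/s.
    """
    if bus_width_bits <= 0 or data_rate_gbps <= 0:
        raise ValueError("bus width and data rate must be positive")
    # Bits per transfer -> bytes per transfer, times transfers per second.
    return bus_width_bits / 8 * data_rate_gbps


def required_data_rate_gbps(bus_width_bits: float, target_gbs: float) -> float:
    """Per-pin data rate needed to reach a target bandwidth on a given bus."""
    if bus_width_bits <= 0 or target_gbs <= 0:
        raise ValueError("bus width and target bandwidth must be positive")
    return target_gbs * 8 / bus_width_bits


if __name__ == "__main__":
    # Assumed 5870 figures: 256-bit bus, 4.8 Gbps effective GDDR5.
    print(f"5870: about {memory_bandwidth_gbs(256, 4.8):.1f} GB/s")
    # What a hypothetical 512-bit bus would deliver at the same data rate.
    print(f"512-bit at 4.8 Gbps: about {memory_bandwidth_gbs(512, 4.8):.1f} GB/s")
```

With these assumed inputs the formula lands on the 153.6 figure quoted above, and it shows why a wider bus at the same memory speed would raise bandwidth proportionally.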

Where is all that extra shader processing going to go ?

Well, it goes to SSAA usage for instance, which provides ZERO visual quality improvement.

So, it goes to "cranking up the eye candy settings" at "not a very big framerate improvement".

--

So they just have to have a 384 or a 512 bus they are holding back. I dearly hope so.

They've already been losing a BILLION a year for 3+ years in a row, so the cost excuse is VERY VERY LAME.

I mean go check out the nvidia leaked stats, they've been all over for months - GDDR5 and a 512 bit bus, with MIMD instead of the 5870's SIMD.

If you want DOUBLE the performance wait for Nvidia. From GDDR3 to GDDR5, like going from 4850 to 4890, AND they (NVIDIA) have a whole new multiple instruction super whomp coming down the pike that has never been done before, on their hated "gigantic brute force cores" (even bigger in the 40nm shrink -lol) that generally run 15C to 24C cooler than ati's electromigration heat generators.

---

So I mean add it up. Moving to GDDR5, moving to multiple data, moving to 40nm, moving to a 512 bit bus and AWESOME bandwidth, and the core is even bigger than the hated monster GT200. LOL

We're talking EPIC palmface.

--

In closing, buh bye ati !

silverblue - Thursday, September 24, 2009 - link

Let's wait for GT300 before we make any sweeping generalisations. The proof is in the pudding and it won't be long before we see it.

And don't let the door hit you on the way out.

SiliconDoc - Thursday, September 24, 2009 - link

Oh mister, if we're waiting, that means NO BUYING the 5870, WAIT instead.

Oh, yeah, no worries, it's not available.

---

Now, when my statements prove to be true, and your little crybaby snark with NO FACTS used for rebuttal are proven to be wasted stupidity, WILL YOU LEAVE AS YOU STATED YOU WANT ME TO ?

That's what I want to know. I want to know if your little wish applies to YOU.

Come on, I'll even accept a challenge on it. If I turn out to be wrong, I leave, if not you're gone.

If I'm correct, anyone waiting because of what I've pointed out, has been given an immense helping hand.

Which BY THE WAY, the entire article FAILED TO POINT OUT, because the red love goes so deep here.

-

But, you, the little red rooster ati fanner, wants me out.

ROFL - you people are JUST INCREDIBLE, it is absolutely incredible the crap you pull.

NOW, LET US KNOW WHAT LEAKED NVIDIA STATS I GOT INCORRECT WON'T YOU!?

No of course you won't !

silverblue - Friday, September 25, 2009 - link

Another problem I have with people like you is the unerring desire to rant and rave without reading things through. I said wait for GT300 before doing a proper comparison. Have you already forgotten the mess that was NV30? Paper specs do not necessarily equal reality. When the GT300 is properly previewed, even with an NDA in place, we can all judge for ourselves. People have a choice to buy what they like regardless of what you or I say.

I'm not an ATI fanboy. I expended plenty of thought on what parts to get when I upgraded a few months back and that didn't just include CPU but motherboard and graphics. I was very close to getting a higher end Core2 Duo along with an nVidia graphics card; at the very least I considered an nVidia graphics card even when I decided on an AMD CPU and motherboard. In the end I felt I was getting better value by choosing an ATI solution. Doesn't make me a fanboy just because my money didn't end up on the nVidia balance sheet.

I'll take back the little comment about letting the door hit you on the way out. It wasn't designed to tell you to go away and not come back again, so my bad. I was annoyed at your ability to just attack a specific brand without any apparent form of objectivity. If you hate ATI, then you hate ATI, but do we really need to hear it all the time?

If the information you've posted about the GT300 is indeed accurate and comparable to what we've been told about the 58x0 series, then that's great, but you're going to need to lay it out in a more structured format so people can digest it more readily, as well as lay off the constant anti-ATI stance because appearing biased is not going to make people more receptive to your viewpoint. I remain sceptical that your leaked specs will end up being correct but in the end, GT300 is on its way and it'll be a monster regardless of whatever information you've posted here. I'm not going to pretend I know anything technical about GT300, but you must realise that what you've essentially done in this article is slate a working, existing product line that is being distributed to vendors as we speak in a manner that's much slower than ATI had intended yet you're attacking people for being interested in it over the GT300 which hasn't been reviewed yet, partly because you think the product is vapourware (which isn't really the case as people are getting hold of the 5870 but at a lower rate than ATI would like). Some people will choose to wait, some people will jump on the 58x0 bandwagon right now, but it's not for you to decide for them what they should buy.

Now relax, you're going to have a heart attack.

SiliconDoc - Wednesday, September 30, 2009 - link

What a LOAD OF CRAP.

I don't have to outline anything, remember, ALL YOU PEOPLE CARE ABOUT IS GAMING FRAMERATE.

And that at "your price point" that doesn't include "the NVIDIA BALANCE SHEET" - which is, of course, the STRANGEST WAY for a reddie to put it.

YOU JUST WANT ME TO SHUT UP. YOU DON'T WANT IT SAID. WELL, I'M SAYING IT AGAIN, AND YOU FAILED TO ACCEPT MY CHALLENGE BECAUSE YOU'RE A CHICKEN, AND CENSOR !

---

Oh, do we have to hear it... blah blah blah blah...

--

YES SINCE THIS VERY ARTICLE WAS ABSOLUTELY IRRESPONSIBLE IN NOT PROPERLY ASSESSING THE COMPETITION.

CarrellK - Wednesday, September 23, 2009 - link

If a game ran almost entirely on the GPU, the scaling would be more of what you expect. You can put in a new GPU, but the CPU is no faster, main memory is no faster or bigger, the hard disk is no faster, PCIE is no faster, etc.

The game code itself also limits scaling. For example the texture size can exceed the card's memory footprint, which results in performance-sapping texture swaps. Each game introduces different bottlenecks (we can't solve them all).

We do our best to get linear scaling, but the fact is that we address less than a third of the game ecosystem. That we do better than 33% out of a possible 100% improvement is I think a testimony to our engineers.
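The scaling argument here can be put in rough numbers with Amdahl's law: if only part of each frame's time depends on the GPU, doubling GPU throughput speeds up only that part. The sketch below is an illustration under that simplified model, not a description of how AMD measures scaling. The function name and the GPU-bound shares in the loop are hypothetical values chosen for the example.

```python
def overall_speedup(gpu_fraction: float, gpu_speedup: float) -> float:
    """Amdahl's law: whole-frame speedup when only the GPU-bound share gets faster.

    gpu_fraction: share of frame time limited by the GPU, between 0 and 1.
    gpu_speedup: how many times faster the new GPU handles that share.
    """
    if not 0.0 <= gpu_fraction <= 1.0:
        raise ValueError("gpu_fraction must be between 0 and 1")
    if gpu_speedup <= 0:
        raise ValueError("gpu_speedup must be positive")
    # The non-GPU share is unchanged; the GPU share shrinks by gpu_speedup.
    return 1.0 / ((1.0 - gpu_fraction) + gpu_fraction / gpu_speedup)


if __name__ == "__main__":
    # Hypothetical GPU-bound shares, each paired with a GPU twice as fast.
    for fraction in (1 / 3, 0.5, 0.75, 1.0):
        gain = overall_speedup(fraction, 2.0) - 1.0
        print(f"GPU-bound share {fraction:.0%}: frame rate up about {gain:.0%}")
```

Under this model a doubled GPU only doubles the frame rate when the game is entirely GPU-bound; every CPU, memory, disk, or PCIe limit pulls the gain below 100%.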

BlackbirdCaD - Wednesday, September 23, 2009 - link

Why no load temp of 5870 in Crossfire??

Load temp is much more important than idle temp.

There is lots of uninteresting stuff like sound level at idle with 5870 in crossfire, but the MOST IMPORTANT is missing: load temp with 5870 in crossfire.

SiliconDoc - Wednesday, September 23, 2009 - link

I just pulled up the chart on the 4870 CF, and although the 4870x2 was low 400's on load system power usage, the 4870 CF was 722 watts !

So, I think your question may have some validity. I do believe the cards get a bit hotter in CF, and then you have the extra items on the PCB, the second slot used, the extra ram via the full amount on each card - all that adds up to more power usage, more heat in the case, and higher temps communicating with each other (resends for data on the bus, pauses, waits, etc.).

So there is something to your question.

---

Other than all that the basic answer is "red fan review site".

The ATI cards are HOTTER than the nvidia under load as a very, very wide general statement, that covers almost every card they both make, with FEW exceptions.