AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

by Ryan Smith on September 23, 2009 9:00 AM EST - Posted in GPUs

More GDDR5 Technologies: Memory Error Detection & Temperature Compensation

As we previously mentioned, for Cypress AMD’s memory controllers have implemented a greater part of the GDDR5 specification. Beyond gaining the ability to use GDDR5’s power saving abilities, AMD has also been working on implementing features to allow their cards to reach higher memory clock speeds. Chief among these is support for GDDR5’s error detection capabilities.

One of the biggest problems in using a high-speed memory device like GDDR5 is that it requires a bus that’s both fast and fairly wide - properties that generally run counter to each other in designing a device bus. A single GDDR5 memory chip on the 5870 needs to connect to a bus that’s 32 bits wide and runs at a base speed of 1.2GHz, which requires a bus that can meet exceedingly precise tolerances. Adding to the challenge is that for a card like the 5870 with a 256-bit total memory bus, eight of these buses will be required, leading to more noise from adjoining buses and less room to work in.

Because of the difficulty in building such a bus, the memory bus has become the weak point for video cards using GDDR5. The GPU’s memory controller can do more and the memory chips themselves can do more, but the bus can’t keep up.

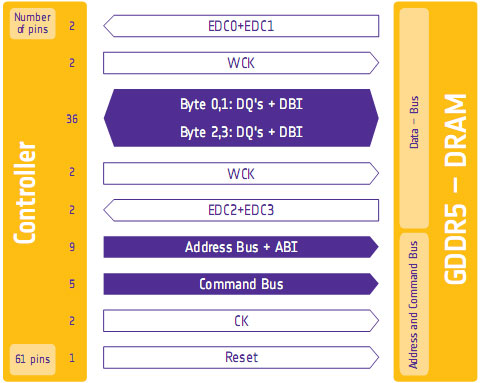

To combat this, GDDR5 memory controllers can perform basic error detection on both reads and writes by implementing a CRC-8 hash function. With this feature enabled, for each 64-bit data burst an 8-bit cyclic redundancy check hash (CRC-8) is transmitted via a set of four dedicated EDC pins. This CRC is then used to check the contents of the data burst, to determine whether any errors were introduced into the data burst during transmission.

The specific CRC function used in GDDR5 can detect 1-bit and 2-bit errors with 100% accuracy, with that accuracy falling with additional erroneous bits. This is due to the fact that the CRC function used can generate collisions, which means that the CRC of an erroneous data burst could match the proper CRC in an unlikely situation. But since the odds of an error fall as the number of erroneous bits rises, the vast majority of errors should be limited to 1-bit and 2-bit errors.
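To make the 1-bit and 2-bit guarantee concrete, here is a minimal Python sketch. It assumes the CRC-8 generator polynomial x^8 + x^2 + x + 1 (0x07, often called CRC-8-ATM), a zero initial value, and a 64-bit burst handled as eight bytes; none of these details are spelled out above, and the helper names `crc8`, `flip_bits` and `undetected_errors` are purely illustrative. It brute-forces every 1-bit and 2-bit corruption of a burst and counts how many would slip past the check.

```python
from itertools import combinations

CRC8_POLY = 0x07  # assumed generator polynomial: x^8 + x^2 + x + 1


def crc8(data: bytes, poly: int = CRC8_POLY) -> int:
    """Bitwise CRC-8, MSB first, zero initial value (assumed parameters)."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def flip_bits(burst: bytes, positions) -> bytes:
    """Return a copy of the burst with the given bit positions inverted."""
    value = int.from_bytes(burst, "big")
    for pos in positions:
        value ^= 1 << pos
    return value.to_bytes(len(burst), "big")


def undetected_errors(burst: bytes, n_flips: int) -> int:
    """Count n_flips-bit corruptions whose CRC still matches the clean CRC."""
    good = crc8(burst)
    total_bits = len(burst) * 8
    return sum(
        1
        for positions in combinations(range(total_bits), n_flips)
        if crc8(flip_bits(burst, positions)) == good
    )


if __name__ == "__main__":
    burst = bytes.fromhex("0123456789abcdef")  # arbitrary 64-bit example burst
    for n in (1, 2):
        print(n, "bit errors undetected:", undetected_errors(burst, n))
```

Under these assumptions no 1-bit or 2-bit corruption of the burst goes unnoticed. Collisions only become possible with larger error patterns, and checking those exhaustively takes noticeably longer.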

Should an error be found, the GDDR5 controller will request a retransmission of the faulty data burst, and it will keep doing this until the data burst finally goes through correctly. A retransmission request is also used to re-train the GDDR5 link (once again taking advantage of fast link re-training) to correct any potential link problems brought about by changing environmental conditions. Note that this does not involve changing the clock speed of the GDDR5 (i.e. it does not step down in speed); rather it’s merely reinitializing the link. If the errors are due to the bus being outright unable to perfectly handle the requested clock speed, errors will continue to happen and be caught. Keep this in mind as it will be important when we get to overclocking.
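The retry behaviour can be sketched as a small simulation. This is only an illustration under stated assumptions: the bus is modelled as flipping each bit independently with a made-up `bit_error_rate`, the CRC itself is assumed to arrive intact, the CRC-8 polynomial 0x07 is an assumption, and `max_attempts` is a safety cap added for the sketch (the controller described above simply keeps retrying). All function names are hypothetical.

```python
import random


def crc8(data: bytes, poly: int = 0x07) -> int:
    """Bitwise CRC-8, MSB first, zero initial value (assumed parameters)."""
    crc = 0
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x80:
                crc = ((crc << 1) ^ poly) & 0xFF
            else:
                crc = (crc << 1) & 0xFF
    return crc


def noisy_transfer(burst: bytes, bit_error_rate: float, rng: random.Random) -> bytes:
    """Send a burst over a toy bus that flips each bit with the given probability."""
    value = int.from_bytes(burst, "big")
    for bit in range(len(burst) * 8):
        if rng.random() < bit_error_rate:
            value ^= 1 << bit
    return value.to_bytes(len(burst), "big")


def read_with_retry(burst, bit_error_rate, rng, max_attempts=10_000):
    """Retransmit until the received burst passes the CRC check.

    Returns (received_burst, attempts_used).
    """
    expected = crc8(burst)  # CRC from the sender, assumed to arrive intact
    for attempt in range(1, max_attempts + 1):
        received = noisy_transfer(burst, bit_error_rate, rng)
        if crc8(received) == expected:
            return received, attempt
        # A real controller would also re-train the link at this point,
        # without lowering the memory clock.
    raise RuntimeError("bus never delivered a burst that passed the CRC check")


if __name__ == "__main__":
    rng = random.Random(2009)
    burst = bytes.fromhex("0123456789abcdef")
    for rate in (0.0, 0.005, 0.02):
        _, attempts = read_with_retry(burst, rate, rng)
        print(f"bit error rate {rate}: delivered after {attempts} attempt(s)")
```

Note that a burst which passes the check is not guaranteed to be clean, since a multi-bit error can collide with the correct CRC; and a bus that is simply too fast for its wiring will keep generating retries no matter how often the link is re-trained.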

Finally, we should also note that this error detection scheme is only for detecting bus errors. Errors in the GDDR5 memory modules or errors in the memory controller will not be detected, so it’s still possible to end up with bad data should either of those two devices malfunction. By the same token this is solely a detection scheme, so there are no error correction abilities. The only way to correct a transmission error is to keep trying until the bus gets it right.

Now in spite of the difficulties in building and operating such a high speed bus, error detection is not necessary for its operation. As AMD was quick to point out to us, cards still need to ship defect-free and not produce any errors. Or in other words, the error detection mechanism is a failsafe mechanism rather than a tool specifically to attain higher memory speeds. Memory supplier Qimonda’s own whitepaper on GDDR5 pitches error detection as a necessary precaution due to the increasing amount of code stored in graphics memory, where a failure can lead to a crash rather than just a bad pixel.

In any case, for normal use the ramifications of using GDDR5’s error detection capabilities should be non-existent. In practice, this is going to lead to more stable cards since memory bus errors have been eliminated, but we don’t know to what degree. The full use of the system to retransmit a data burst would itself be a catch-22 after all – it means an error has occurred when it shouldn’t have.

Like the changes to VRM monitoring, the significant ramifications of this will be felt with overclocking. Overclocking attempts that previously would push the bus too hard and lead to errors now will no longer do so, making higher overclocks possible. However this is a bit of an illusion as retransmissions reduce performance. The scenario laid out to us by AMD is that overclockers who have reached the limits of their card’s memory bus will now see the impact of this as a drop in performance due to retransmissions, rather than crashing or graphical corruption. This means assessing an overclock will require monitoring the performance of a card, along with continuing to look for traditional signs as those will still indicate problems in memory chips and the memory controller itself.
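A rough back-of-the-envelope model shows why a higher memory clock can end up slower. The sketch below assumes independent bit errors, a 64-bit burst, and no extra cost for link re-training; the clock and error-rate pairs in the demo are invented for illustration and are not measurements of any card.

```python
def burst_success_probability(bit_error_rate: float, burst_bits: int = 64) -> float:
    """Chance that every bit of one burst arrives intact (independent errors assumed)."""
    return (1.0 - bit_error_rate) ** burst_bits


def expected_attempts(bit_error_rate: float, burst_bits: int = 64) -> float:
    """Mean number of transmissions per delivered burst (geometric distribution)."""
    p = burst_success_probability(bit_error_rate, burst_bits)
    return float("inf") if p == 0.0 else 1.0 / p


def effective_rate(clock_mhz: float, bit_error_rate: float, burst_bits: int = 64) -> float:
    """Clock scaled by the fraction of bursts that are not retransmissions."""
    return clock_mhz * burst_success_probability(bit_error_rate, burst_bits)


if __name__ == "__main__":
    # Hypothetical overclocking steps: (memory clock in MHz, assumed bit error rate)
    steps = [(1200, 0.0), (1250, 1e-4), (1300, 1e-3), (1350, 1e-2)]
    for clock, rate in steps:
        print(
            f"{clock} MHz: {expected_attempts(rate):.2f} attempts per burst, "
            f"effective {effective_rate(clock, rate):.0f} MHz"
        )
```

In this toy model the effective rate peaks and then falls as the error rate climbs, which is the pattern an overclocker would have to look for in benchmark results rather than in on-screen corruption.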

Ideally there would be a more absolute and expedient way to check for errors than looking at overall performance, but at this time AMD doesn’t have a way to deliver error notices. Maybe in the future they will?

Wrapping things up, we have previously discussed fast link re-training as a tool to allow AMD to clock down GDDR5 during idle periods, and as part of a failsafe method to be used with error detection. However it also serves as a tool to enable higher memory speeds through its use in temperature compensation.

Once again due to the high speeds of GDDR5, it’s more sensitive to memory chip temperatures than previous memory technologies were. Under normal circumstances this sensitivity would limit memory speeds, as temperature swings would change the performance of the memory chips enough to make it difficult to maintain a stable link with the memory controller. By monitoring the temperature of the chips and re-training the link when there are significant shifts in temperature, higher memory speeds are made possible by preventing link failures.
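The idea can be sketched as a simple policy: remember the temperature at which the link was last trained, and re-train once the chips drift far enough from it. The 5 degree threshold, the class name `LinkTrainer` and the sample readings below are all hypothetical; the actual trigger conditions are not described here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LinkTrainer:
    """Toy model of temperature-triggered link re-training."""

    threshold_c: float = 5.0              # assumed drift that forces a re-train
    trained_at_c: Optional[float] = None  # temperature at the last training
    retrain_count: int = 0

    def observe(self, temp_c: float) -> bool:
        """Feed in a temperature sample; return True if the link was re-trained."""
        if self.trained_at_c is None:
            # The first sample stands in for the initial link training.
            self.trained_at_c = temp_c
            return False
        if abs(temp_c - self.trained_at_c) >= self.threshold_c:
            self.trained_at_c = temp_c
            self.retrain_count += 1
            return True
        return False


if __name__ == "__main__":
    trainer = LinkTrainer()
    for temp in [50, 52, 58, 59, 70, 69]:  # made-up memory chip temperatures in C
        action = "re-train link" if trainer.observe(temp) else "keep link"
        print(f"{temp} C: {action}")
```

Re-training only on significant shifts keeps the link matched to current conditions without interrupting memory traffic on every small fluctuation.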

And while temperature compensation may not sound complex, that doesn’t mean it’s not important. As we have mentioned a few times now, the biggest bottleneck in memory performance is the bus. The memory chips can go faster; it’s the bus that can’t. So anything that can help maintain a link along these fragile buses becomes an important tool in achieving higher memory speeds.

327 Comments

SiliconDoc - Wednesday, September 30, 2009

No, it's the fact you tell LIES, and always in ati's favor, and you got caught, over and over again.

That is WHAT HAS HAPPENED.

Now you catch hold of your senses for a moment, and supposedly all the crap you spewed is "ok".

SiliconDoc - Friday, September 25, 2009

Once again, all that matters to YOU, is YOUR games for PC, and ONLY top sellers, and only YOUR OPINION on PhysX.

However, after you claimed only 2 games, you went on to bloviate about Havok.

Now you've avoided entirely that issue. Am I to assume, as you have apparently WISHED and thrown about, that HAVOK does not function on NVidia cards? NO QUITE THE CONTRARY !

--

What is REAL, is that NVidia runs Havok AND PhysX just fine, and not only that but ATI DOES NOT.

Now, instead of supporting BOTH, you have singled out your object of HATRED, and spewed your infantile rants, your put downs, your empty comparisons (mere statements), then DEMAND that I show PhysX is worthwhile, with "golden sellers". LOL

It has been 1.5 years or so since Ageia acquisition, and of course, game developers turning anything out in just 6 short months are considered miracle workers.

The real problem of course for you is ATI does not support PhysX, and when a rogue coder made it happen, NVidia supported him, while ATI came in and crushed the poor fella.

So much for "competition", once again.

Now, I'd demand you show where HAVOK is worthwhile, EXCEPT I'm not the type of person that slams and reams and screams against "a perceived enemy company" just because "my favorite" isn't capable, and in that sense, my favorite IS CAPABLE.

Now, PhysX is awesome, it's great, it's the best there is, and that may or may not change, but as for now, NO OTHER demonstrations (you tube and otherwise) can match it.

That's just a sad fact for you, and with so many maintaining your biased and arrogant demand for anything else, we may have another case of VHS instead of BETA, which of course, you would heartily celebrate, no matter how long it takes to get there.

LOL

Yes, it is funny. It's just hilarious. A few months ago before Mirror's Edge and Anand falling in love with PhysX in it, admittedly, in the article he posted, we had the big screamers whining ZERO.

Well, now a few months later you are whining TWO.

Get ready to whine higher. Yes, you have read about the uptick in support ? LOL

You people are really something.

Oh, I know, CUDA is a big fat zero according to you, too.

(please pass along your thoughts to higher education universities here in the USA, and the federal government national lab research facilities. Thanks)

SiliconDoc - Thursday, September 24, 2009

Yes, another excuse monger. So you basically admit the text is biased, and claim all readers should see the charts and go by those. LOL

So when the text is biased, as you admit, how is it that the rest, the very core of the review is not ? You won't explain that either.

Furthermore, the assumption that competition leads to something better in technology for videocards quicker, fails the basic test that in terms of technology, there is a limit to how fast it proceeds forward, since scientific breakthroughs must come, and often don't come, for instance, new energy technologies, still struggling after decades to make a breakthrough, with endless billions spent, and not much to show for it.

Same here with videocards, there is a LIMIT to the advancement speed, and competition won't be able to exceed that limit.

Furthermore, I NEVER said prices won't be driven down by competition, and you falsely asserted that notion to me.

I DID however say, ATI ALSO IS KNOWN FOR OVERPRICING. (or rather unknown by the red fans, huh, even said by omission to have NOT COMMITTED that "huge sin", that you all blame only Nvidia for doing.)

So you're just WRONG once again.

Begging the other guy to "not argue" then mischaracterizing a conclusion from just one of my statements, ignoring the points made that prove your buddy wrong period, and getting the body of your idea concerning COMPETITION incorrect due to technological and scientific constraints you fail to include, is no way to "argue" at all.

I sure wish there was someone who could take on my points, but so far none of you can. Every time you try, more errors in your thinking are easily exposed.

A MONOPOLY, in let's take for instance, the old OIL BARONS, was not stagnant, and included major advances in search and extraction, as Standard Oil history clearly testifies to.

Once again, the "pat" cliché is good for some things ( for instance competing drug stores, for example ), or other such things that don't involve inaccessible technology that has not been INVENTED yet.

The fact that your simpleton attitude failed to note such anomalies, is clearly evidence that once again, thinking "is not required" for people like you.

Once again, the rebuttal has failed.

kondor999 - Thursday, September 24, 2009

This is just sad, and I'm no fanboy. I really wanted a 5870, but only with 100% more speed than a GTX285 - not a lousy 33%. Definitely not worth me upgrading, so I guess ATI saved me some money. I'm certain that my 3 GTX280's in Tri-SLI will destroy 2 5870's in CF - although with slightly less compatibility (an important advantage for ATI, but not nearly enough).

Moricon - Thursday, September 24, 2009

I have been a regular at Tomshardware for a while now, and keep coming back to Anandtech time and again to read reviews I have already read on other sites, and this one is by far the best I have read so far, (guru3d, toms, firing squad, and many others).

The 5870 looks awesome, but from an upgrade point of view, I guess my system will not really benefit from moving on from E7200 @3.8ghz 4gb 1066, HD4870 @850mhz 4400mhz on 1680x1050.

Such a shame that i dont have a larger monitor at the moment or I would have jumped immediately.

Looks like the path is q9550 and 5870 and 1920x1200 monitor or larger to make sense, then might as well go i7, i5, where do you stop..

Well done ATI, well done! But if history follows the Nvidia 3xx chip will be mindblowing compared!

djc208 - Thursday, September 24, 2009

I was most surprised at how far behind the now 2-generation old 3870 is now (at least on these high-end games). Guess my next upgrade (after a SSD) should be a 5850 once the frenzy dies away.

JonnyDough - Thursday, September 24, 2009

They could probably use a 1.5 GB card. :(

mapesdhs - Wednesday, September 23, 2009

Ryan, any chance you could run Viewperf or other pro-app benchmarks please? Some professionals use consumer hardware as a cheap way of obtaining reasonable performance in apps like Maya, 3DS Max, ProE, etc., so it would be most interesting to know how the 5870 behaves when running such tests, how it compares to Quadro and FireGL cards.

Pro-series boards normally have better performance for ops such as antialiased lines via different drivers and/or different internal firmware optimisations. Someday I figure perhaps a consumer card will be able to match a pro card purely by accident.

Ian.

AmdInside - Wednesday, September 23, 2009

Sorry if this has already been asked but does the 5870 support audio over Display Port? I am holding out for a card that does such a thing. I know it does it for HDMI but also want it to do it for Display Port.

VooDooAddict - Wednesday, September 23, 2009

Been waiting for a single gaming class card that can power more than 2 displays for quite some time. (The more than 2 monitors not necessarily for gaming.)

The fact that this performs a noticeable bit better than my existing 4870 512MB is a bonus.